The year 2024 has gone down in technology history as a year of bold experimentation, driven by a wave of generative artificial intelligence. Companies around the world immersed themselves in the possibilities of AI and launched countless pilot projects. In 2025, however, the picture is changing fundamentally.

The enthusiasm, which analysts at Channelnomics described as "unprecedented", is giving way to hard business reality. The time for monetisation and return on investment has arrived. Customers are no longer asking whether to adopt AI, but how AI will translate into profit.

For IT partners, this transformation opens up a new era of opportunity. They are becoming the key conduits for companies that must navigate an increasingly complex technology landscape. Success in 2025 will depend on the ability to respond to three powerful forces shaping the market: the shift towards profitable artificial intelligence, the imperative of cyber security as the foundation of every operation, and the maturity of the cloud, which is generating demand for new, high-margin optimisation services.

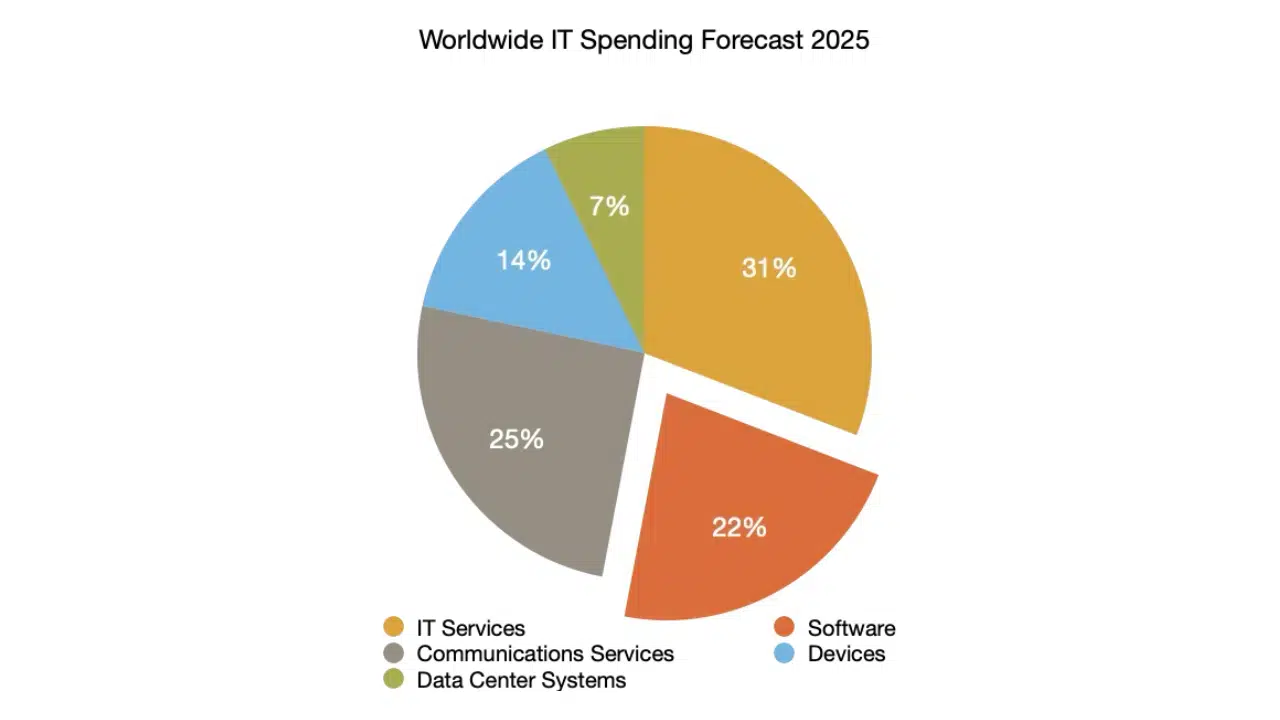

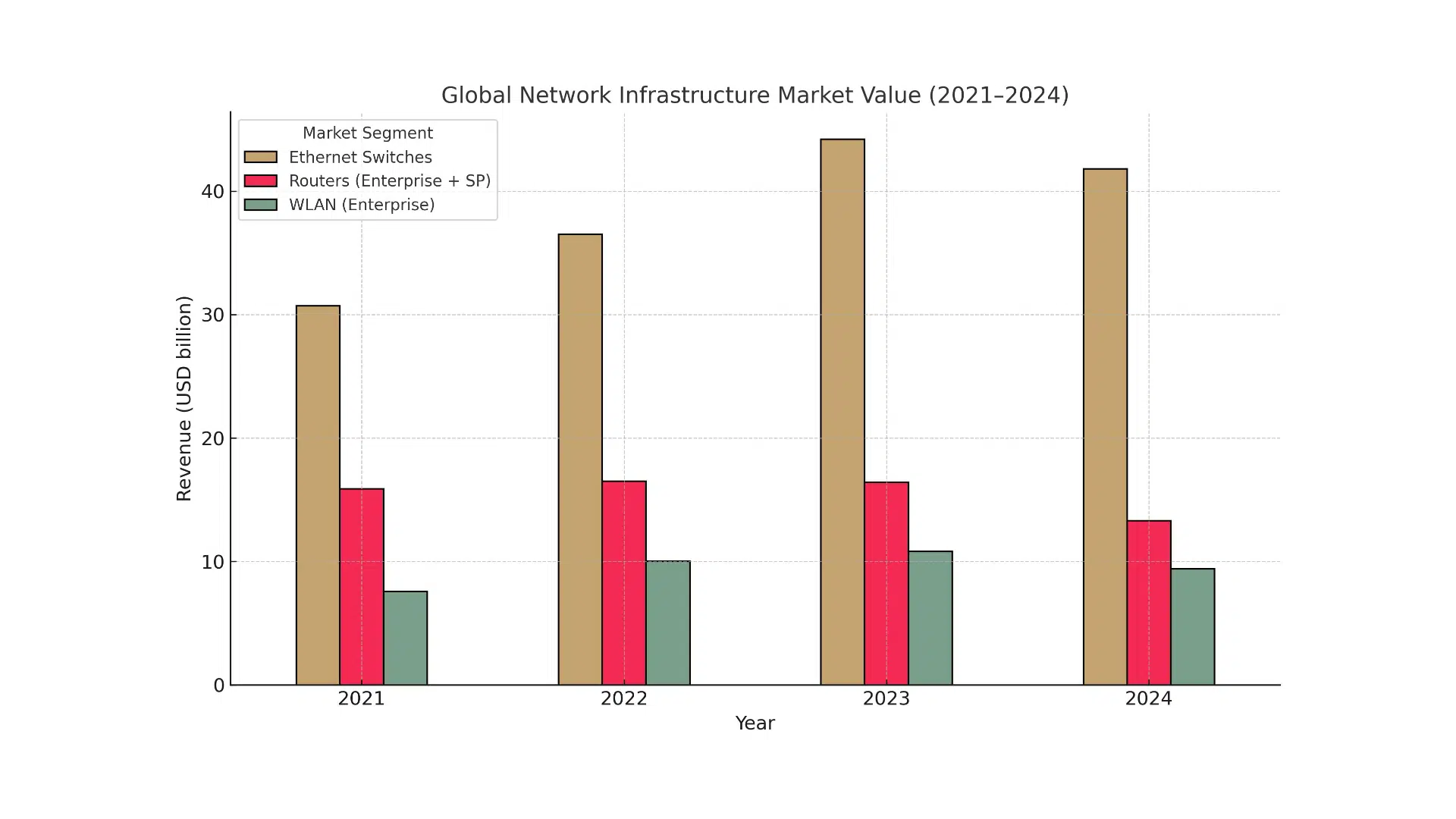

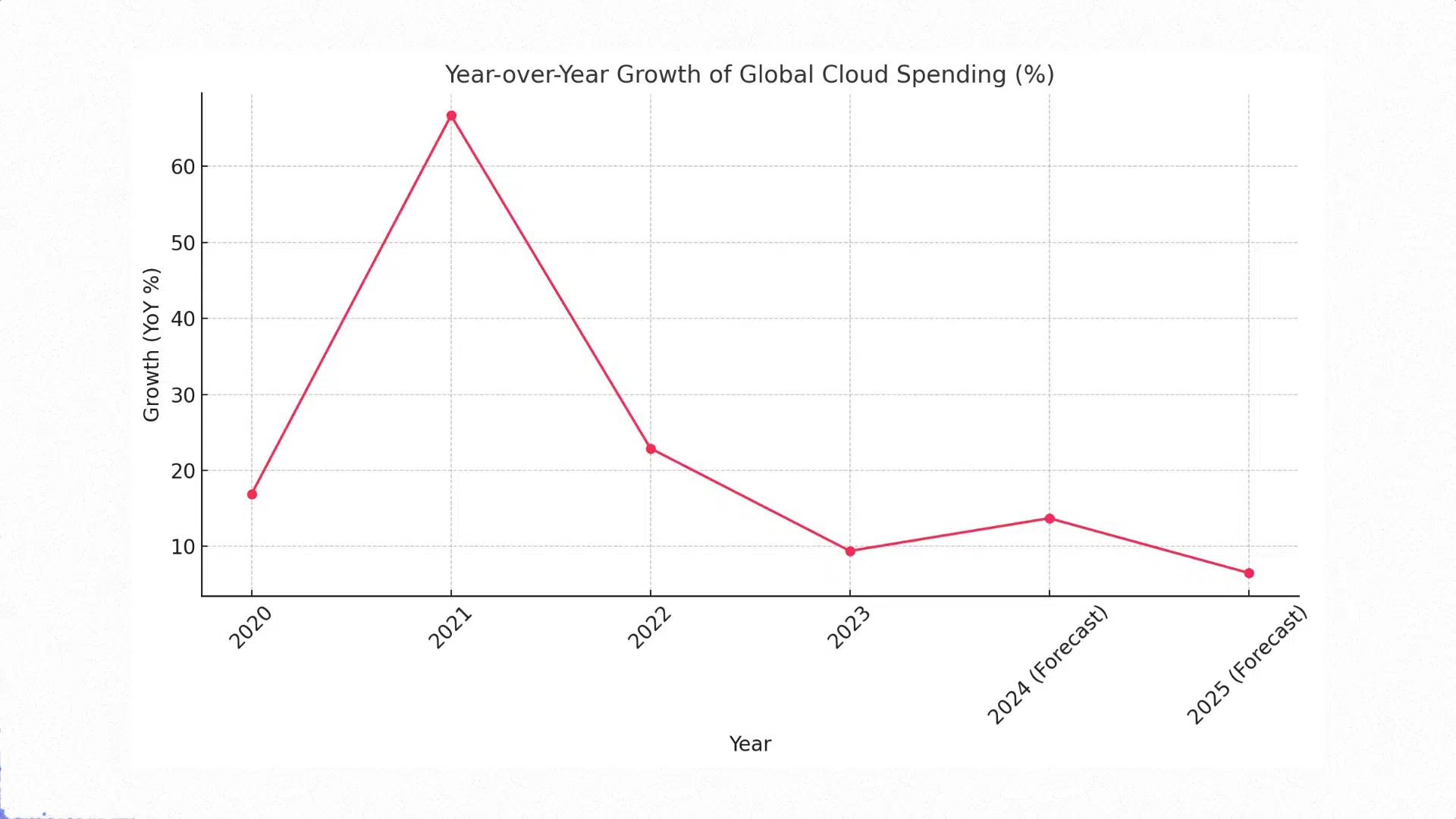

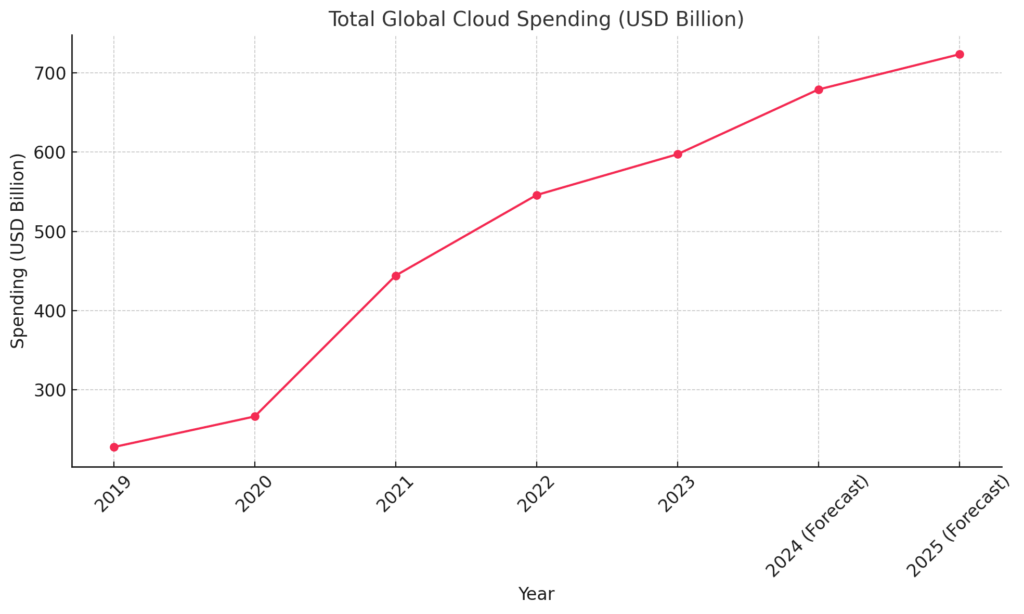

Leading analyst firms agree on the scale of the coming growth. Forecasts of global IT spending in 2025 point to a market worth more than $5 trillion.

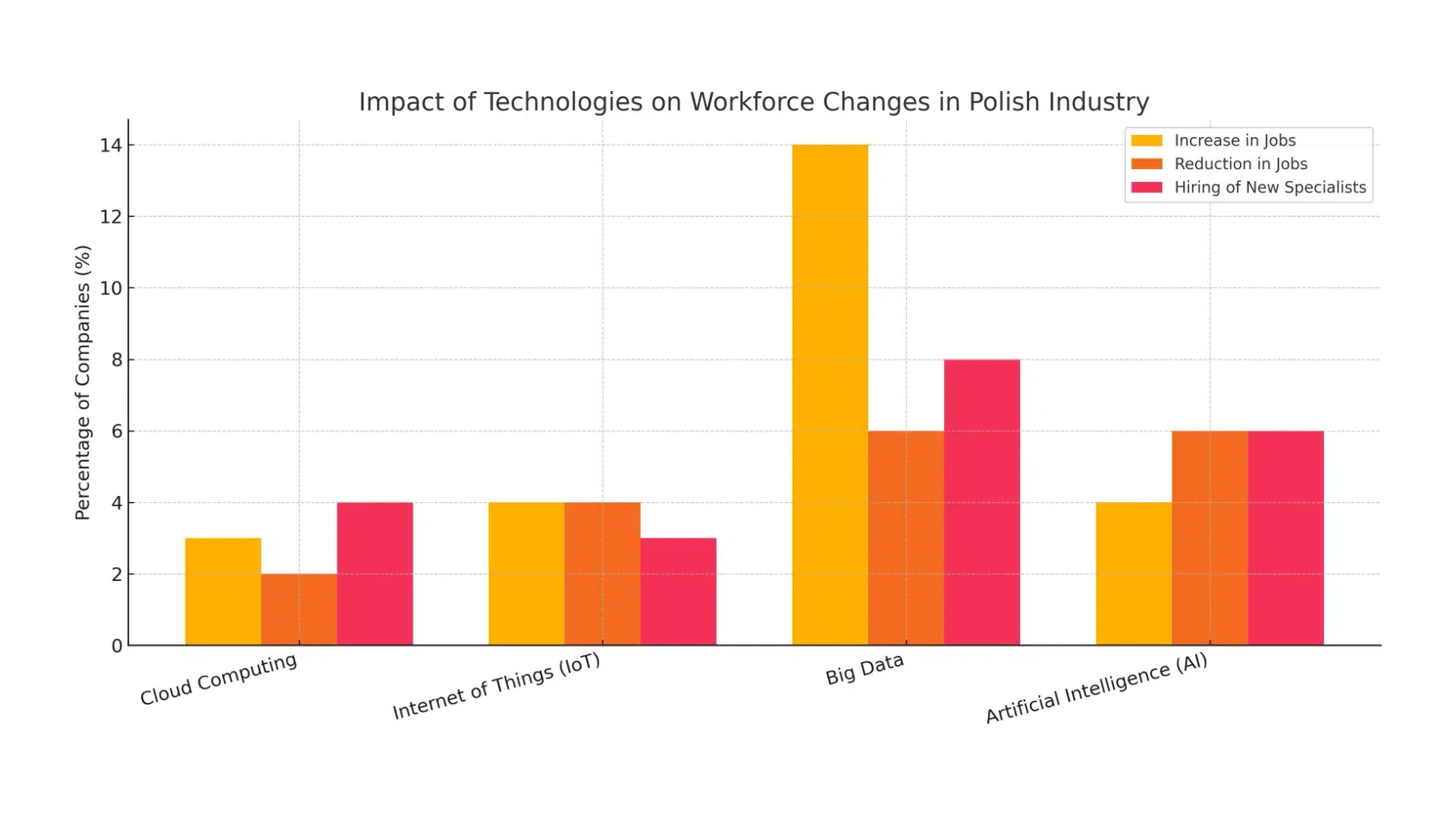

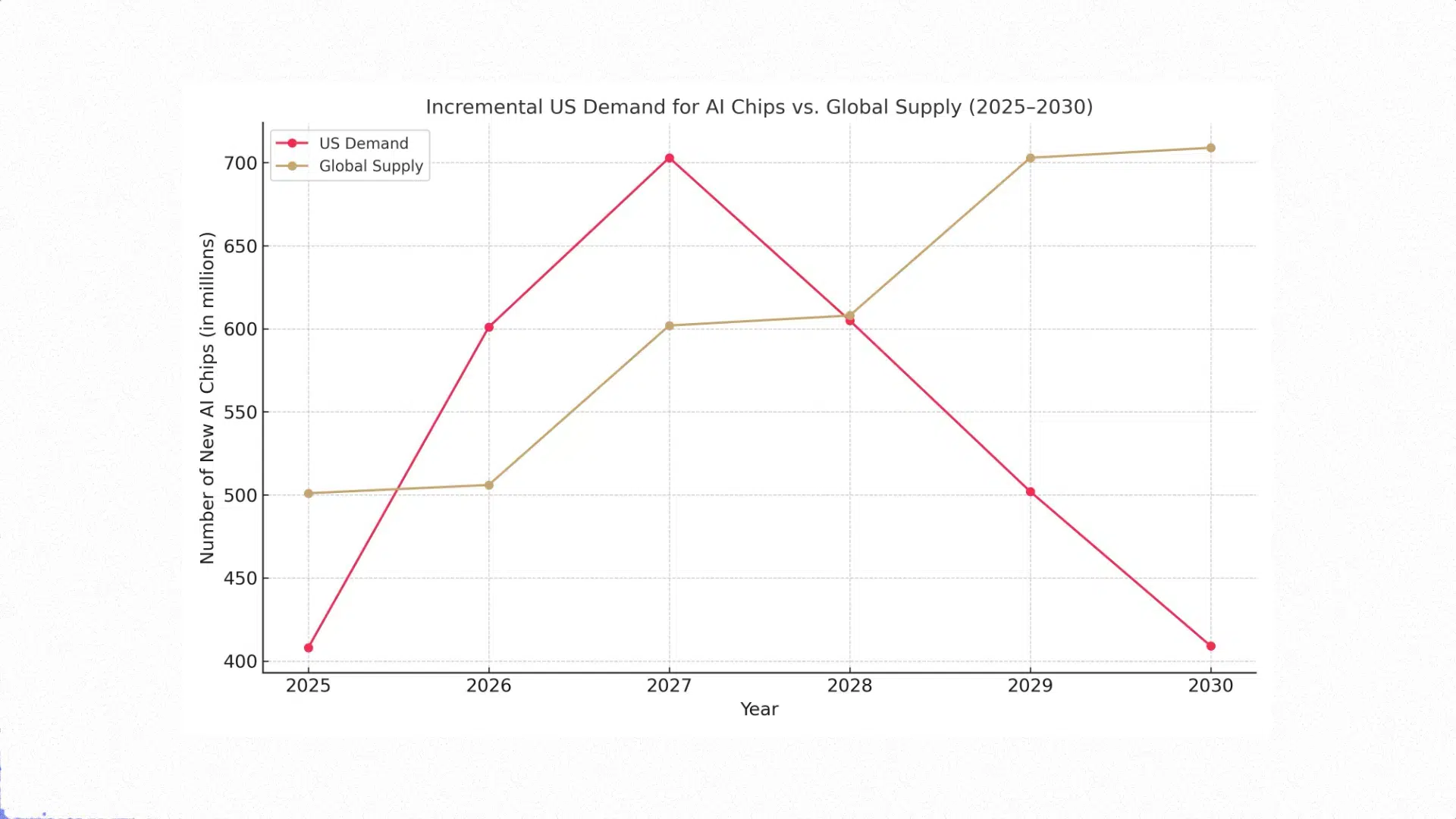

This solid growth, in the order of several per cent, is driven almost entirely by two phenomena: the mass adoption of artificial intelligence and the need to modernise existing systems.

The IT services segment will remain the largest part of the market, with a projected value of $1.69 trillion, but data centre systems and software will see the greatest growth as a direct result of investment in AI.

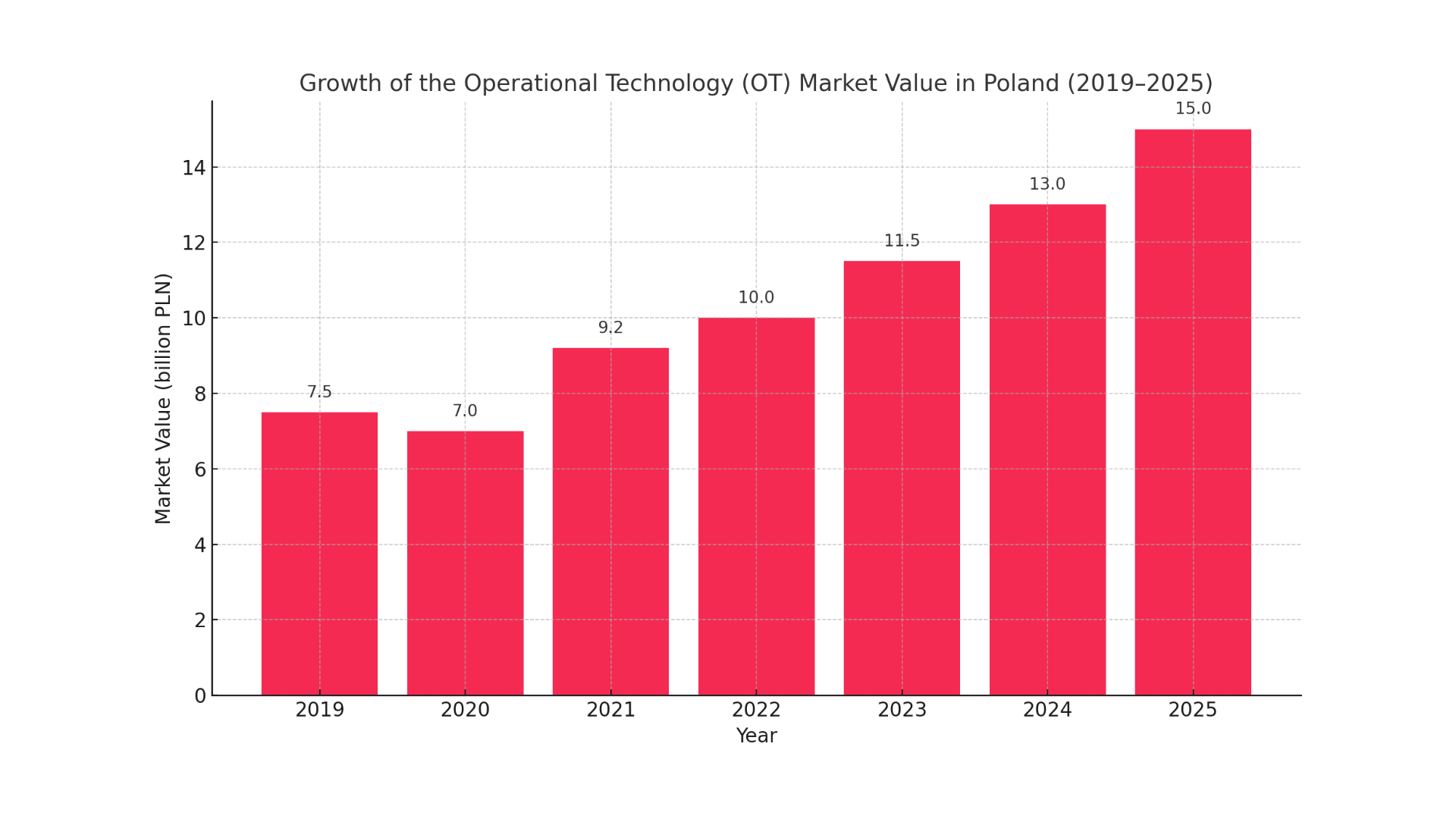

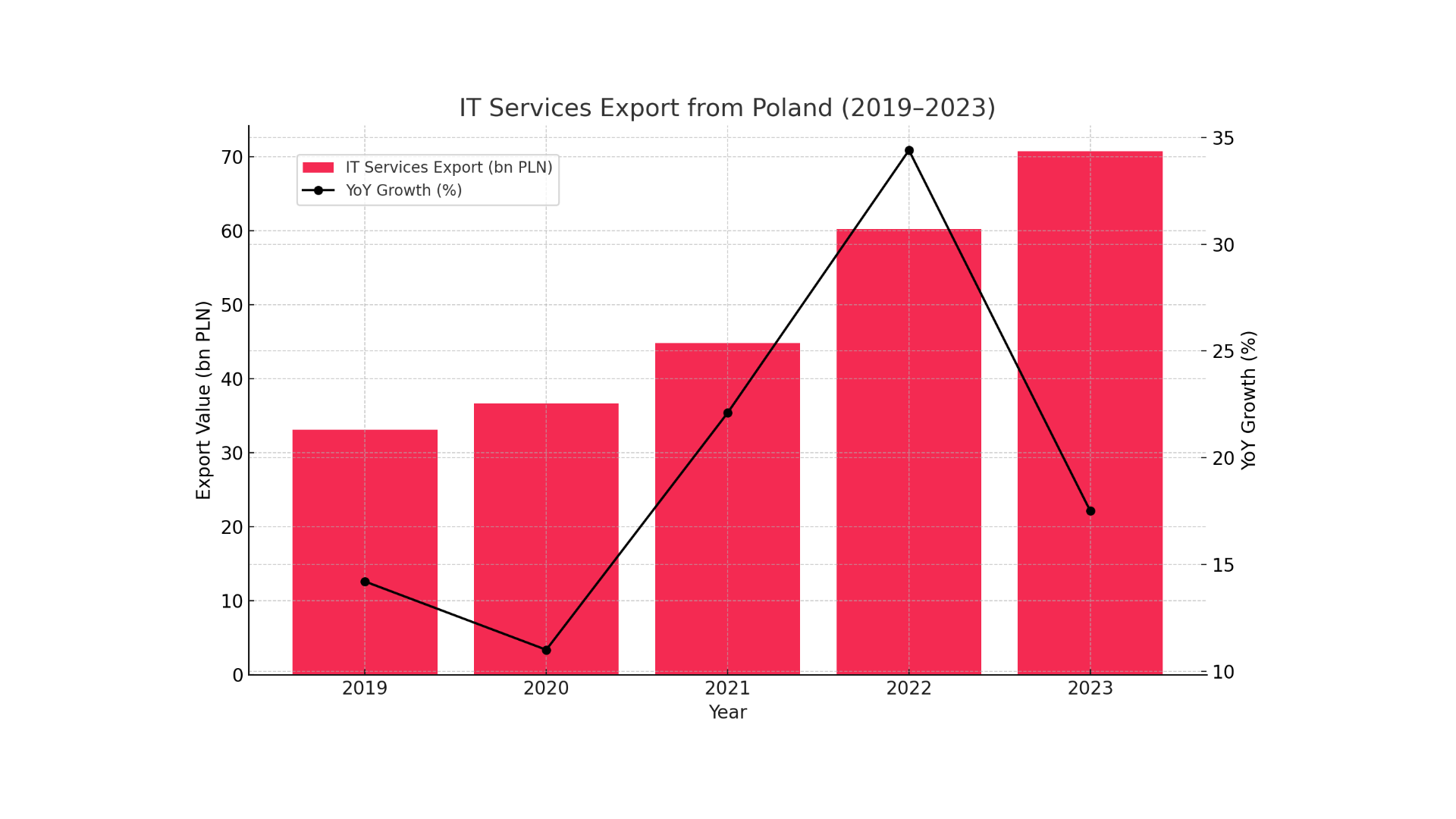

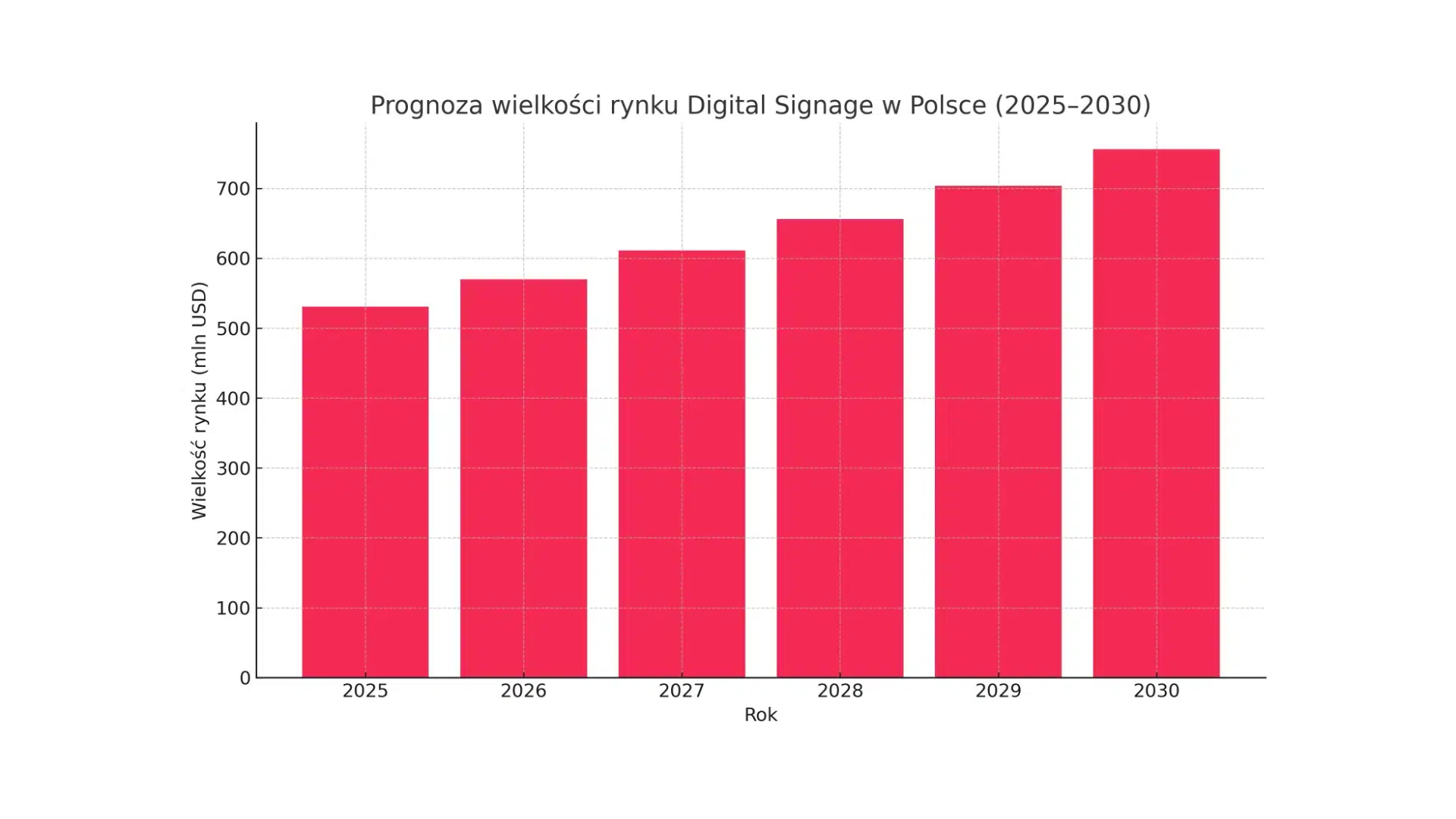

Against this background, Poland is emerging as a regional leader. The Polish IT services market is forecast to reach USD 10.44 billion in 2025, while Poland's GDP is expected to grow by an impressive 4.1%. The market's strength rests on a globally recognised pool of technology talent, which makes our country a leading location for nearshoring and outsourcing.

For local IT partners, however, this position is a double-edged sword. Strong demand creates a large market, but it also means competing with global players.

In this reality, competing on price alone is a strategy with no future. Competitive advantage must be built on talent, value and specialisation.

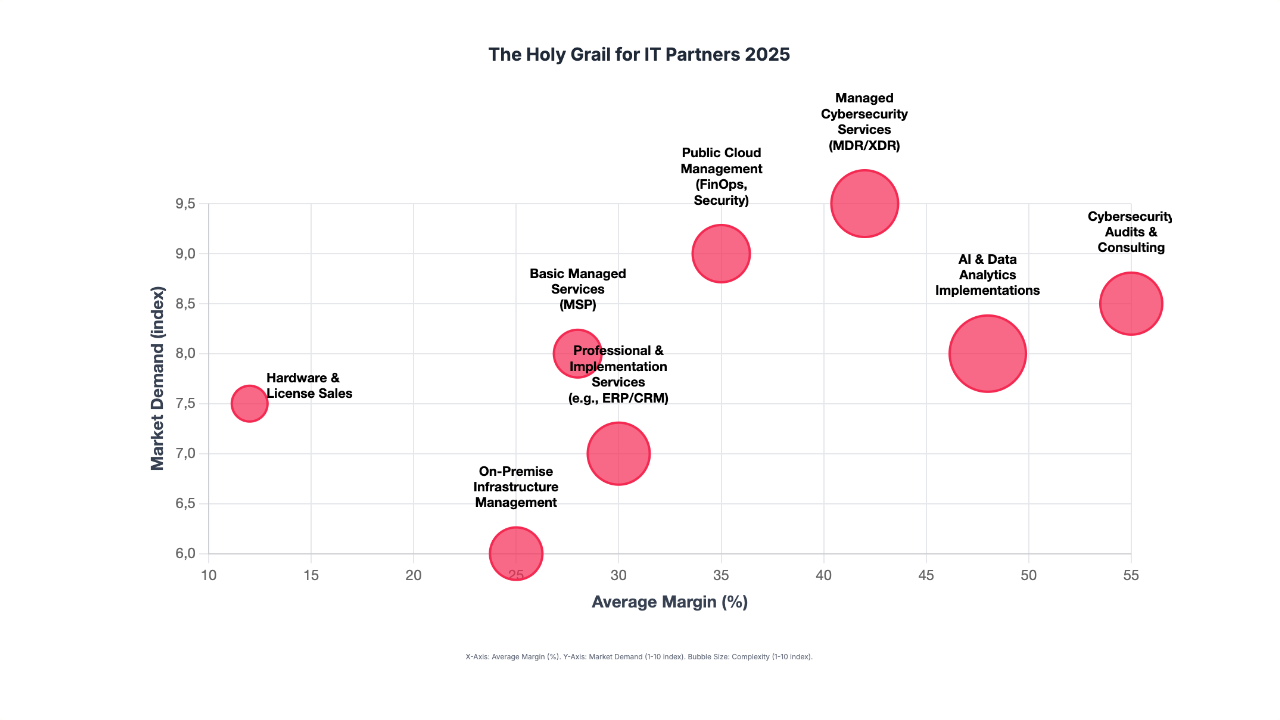

The market analysis clearly identifies three areas where demand, the need for specialisation and margin potential converge, creating ideal conditions for building competitive advantage.

1. The AI revolution: from experiment to profit

The year 2025 marks the end of the era of free lunches in the AI world. Customers who have invested in pilot projects now expect tangible business results. This creates a huge opportunity for partners who can speak the language of ROI.

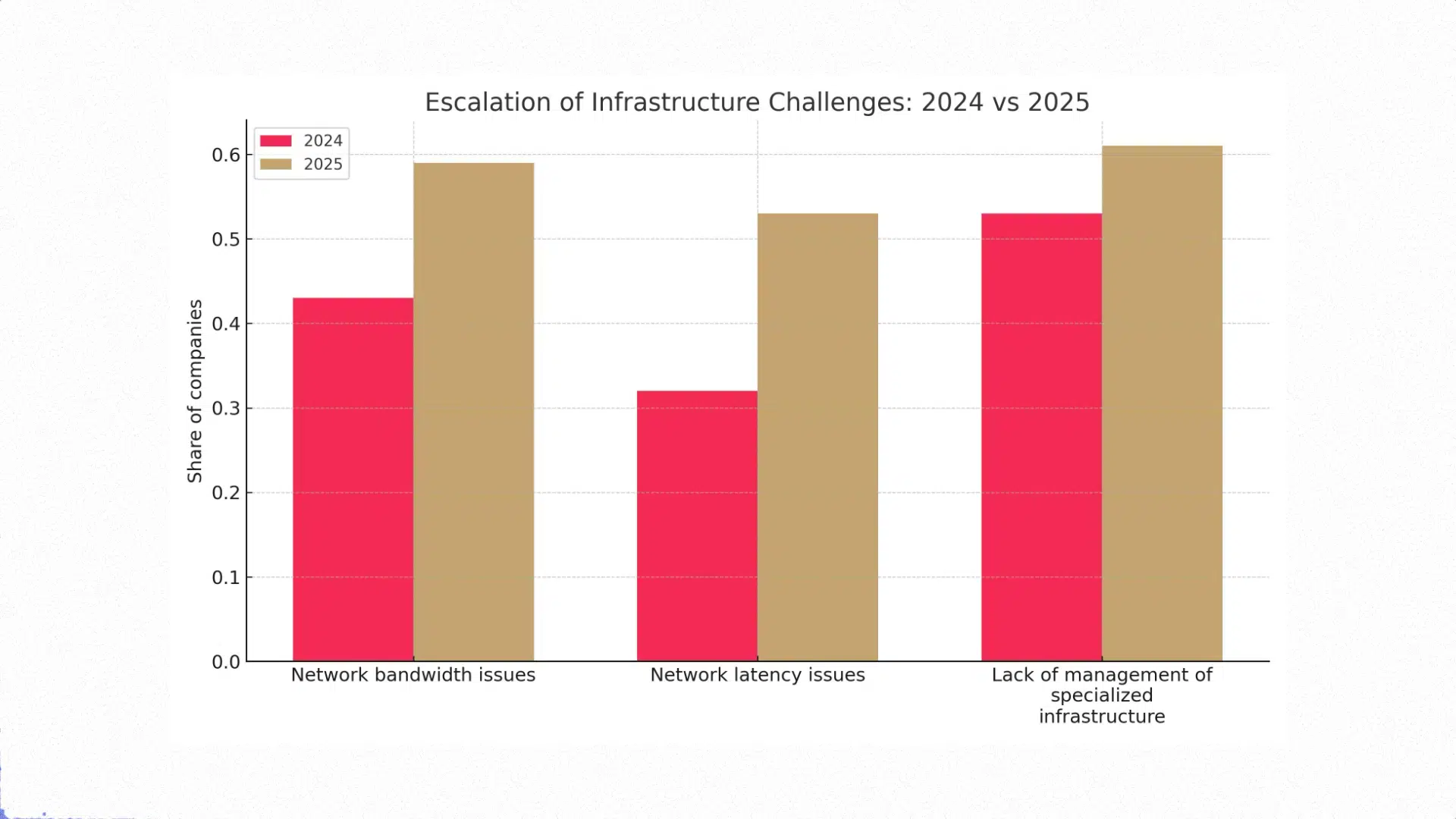

The biggest barriers to AI adoption lie not in the technology itself, but in a lack of skills (42% of companies), insufficient data quality (42%) and the lack of a solid business case (42%). These are precisely the areas where an AI partner can add the most value.

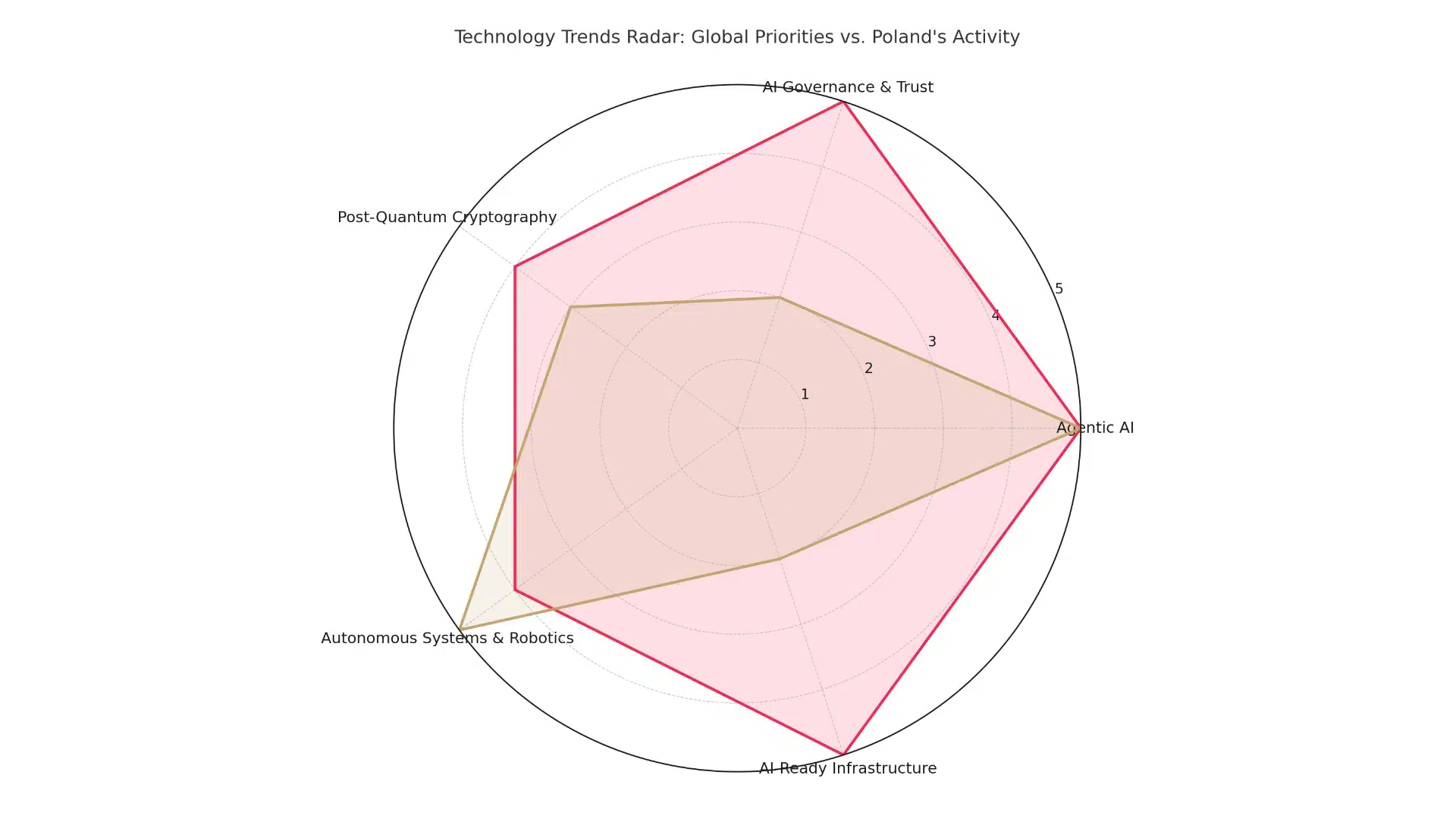

Instead of generic implementations, the most profitable services focus on solving complex problems. AI governance and model explainability are becoming key for firms in regulated sectors, with 44% of organisations planning to invest in them. Rather than offering one-size-fits-all chatbots, partners should build industry-specific solutions that drive AI adoption in sectors such as finance, retail or media.

The ability to manage costs is also becoming crucial. A moderately complex AI project can cost anywhere from $60,000 to more than $250,000, and a partner who can guide the client through the entire process, from concept to implementation, earns the status of a strategic advisor.

2. Cyber security: from reactive responder to proactive guardian

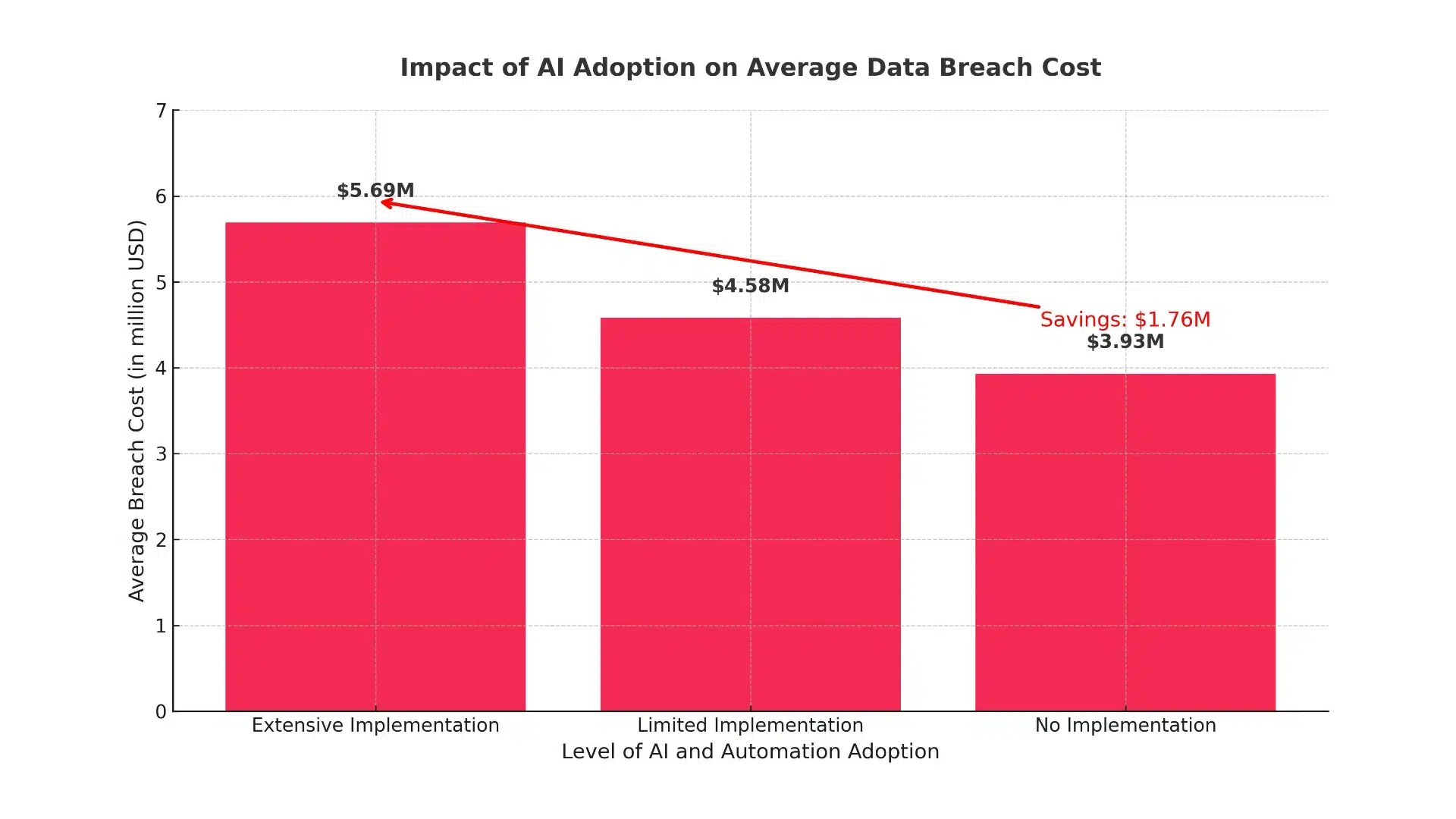

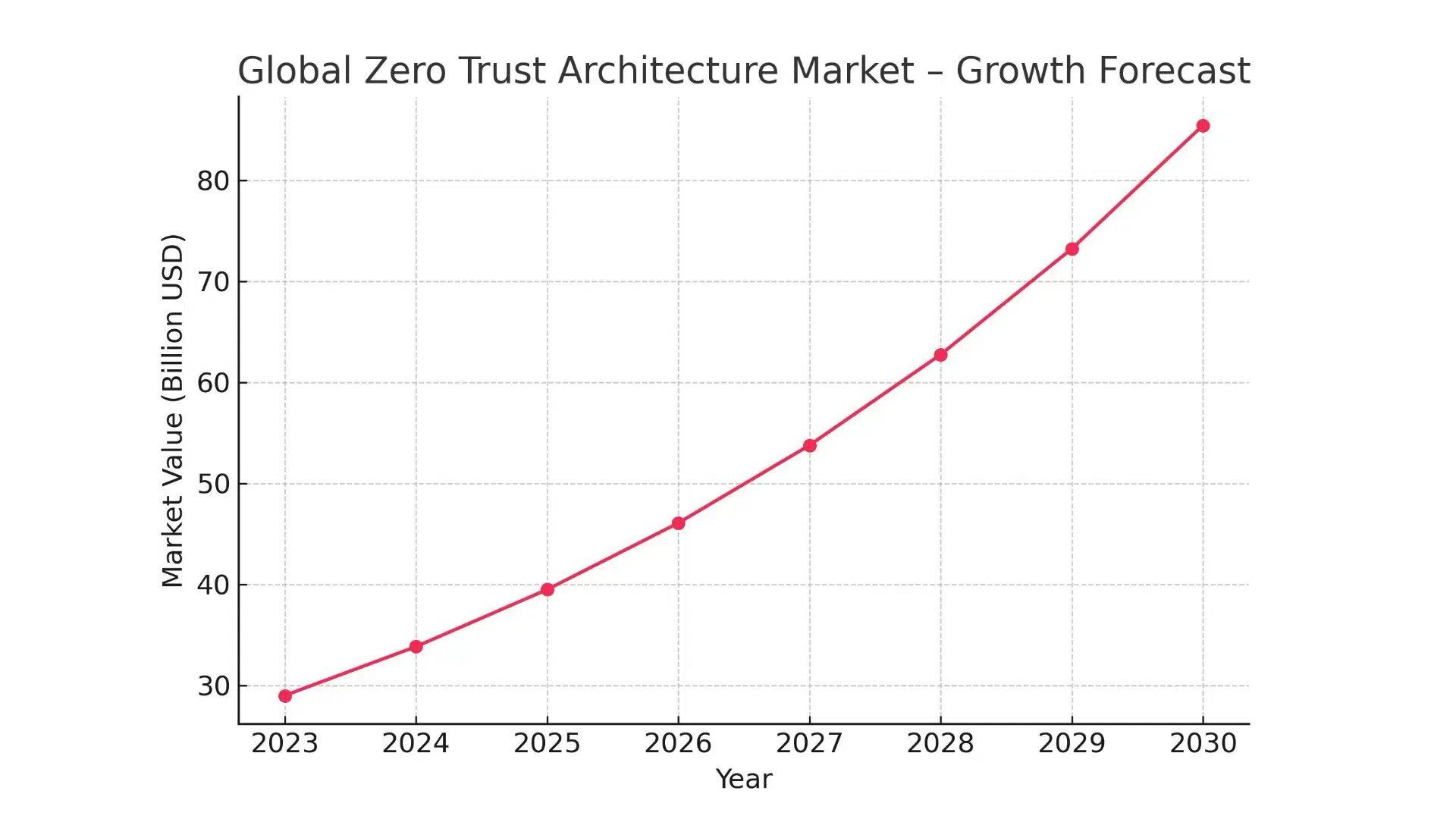

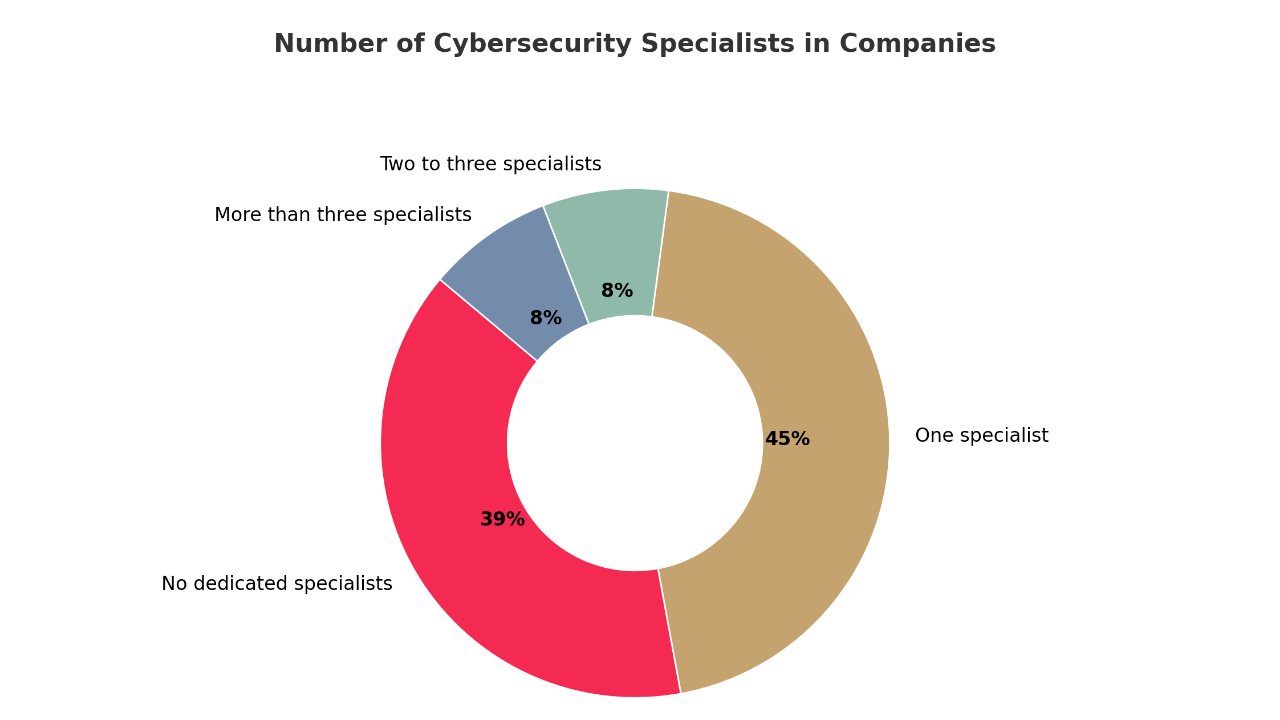

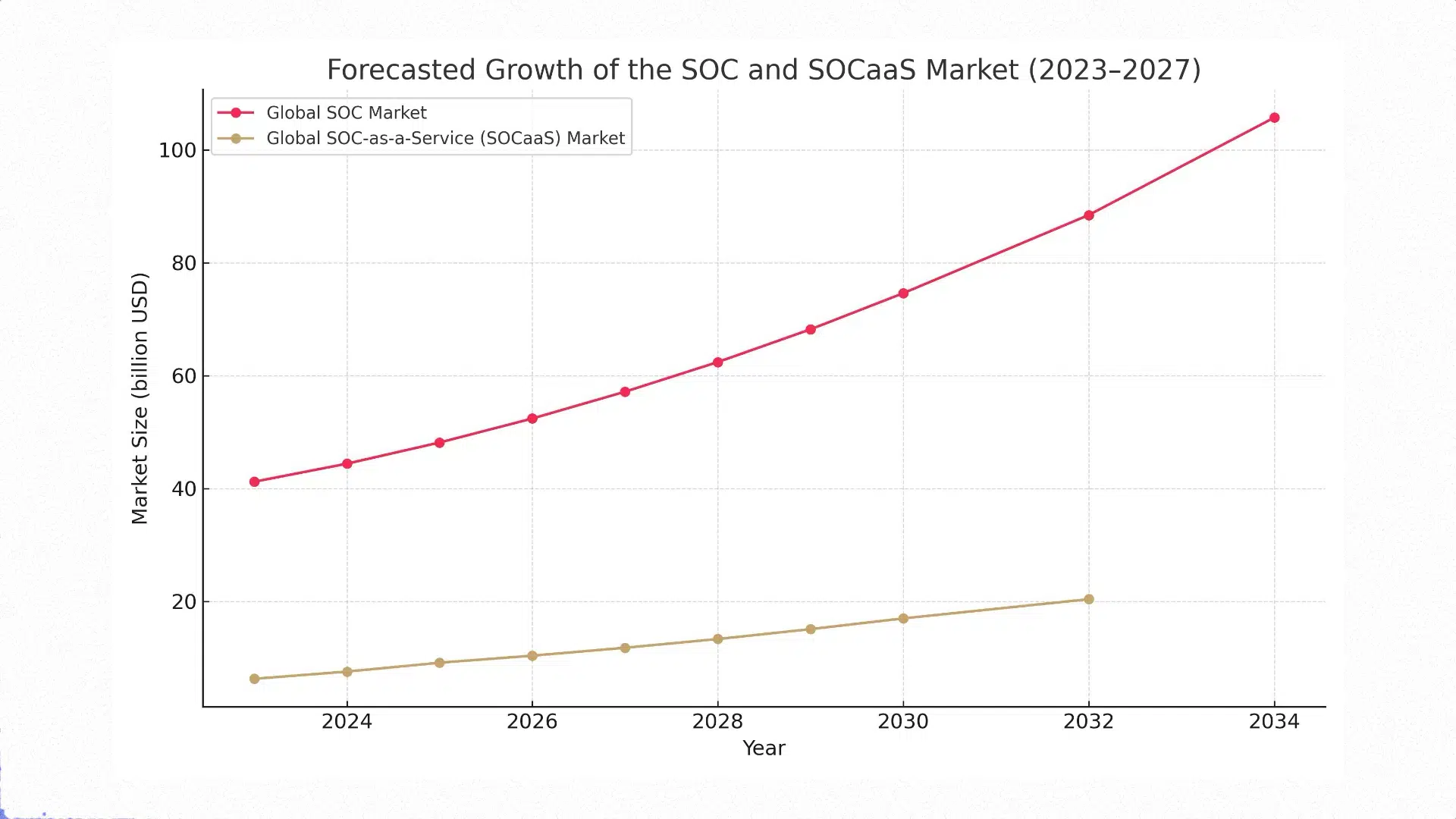

The cyber security market is booming, with a projected annual growth rate of 14.6%. This growth is driven by increasingly complex threats, including AI-assisted attacks, and by the rising financial consequences of successful breaches, whose average cost has already reached $4.88 million.

These services are high-margin: consulting in this area can yield margins of 20-40%, because its value is directly tied to protection against catastrophic risk.

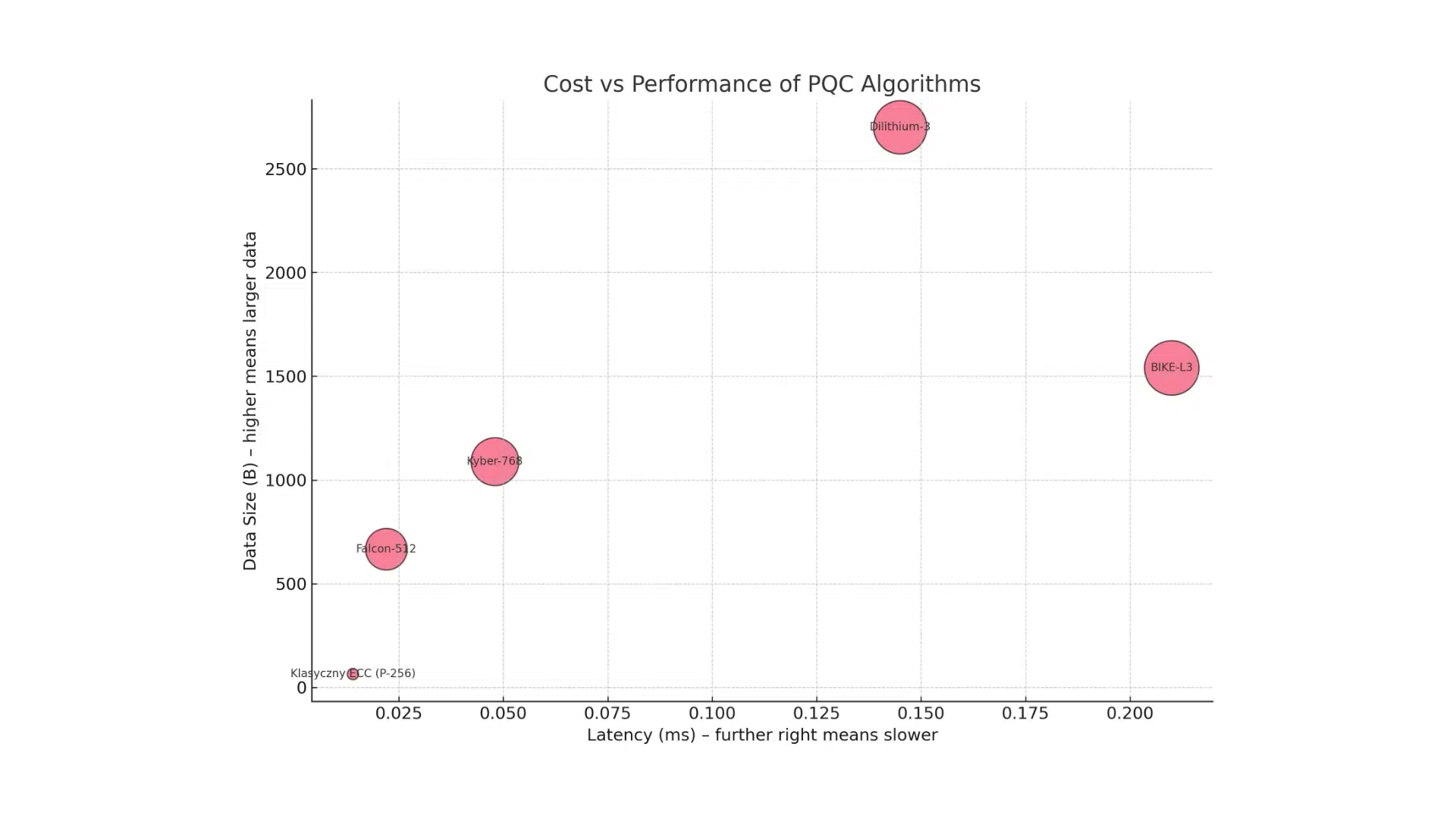

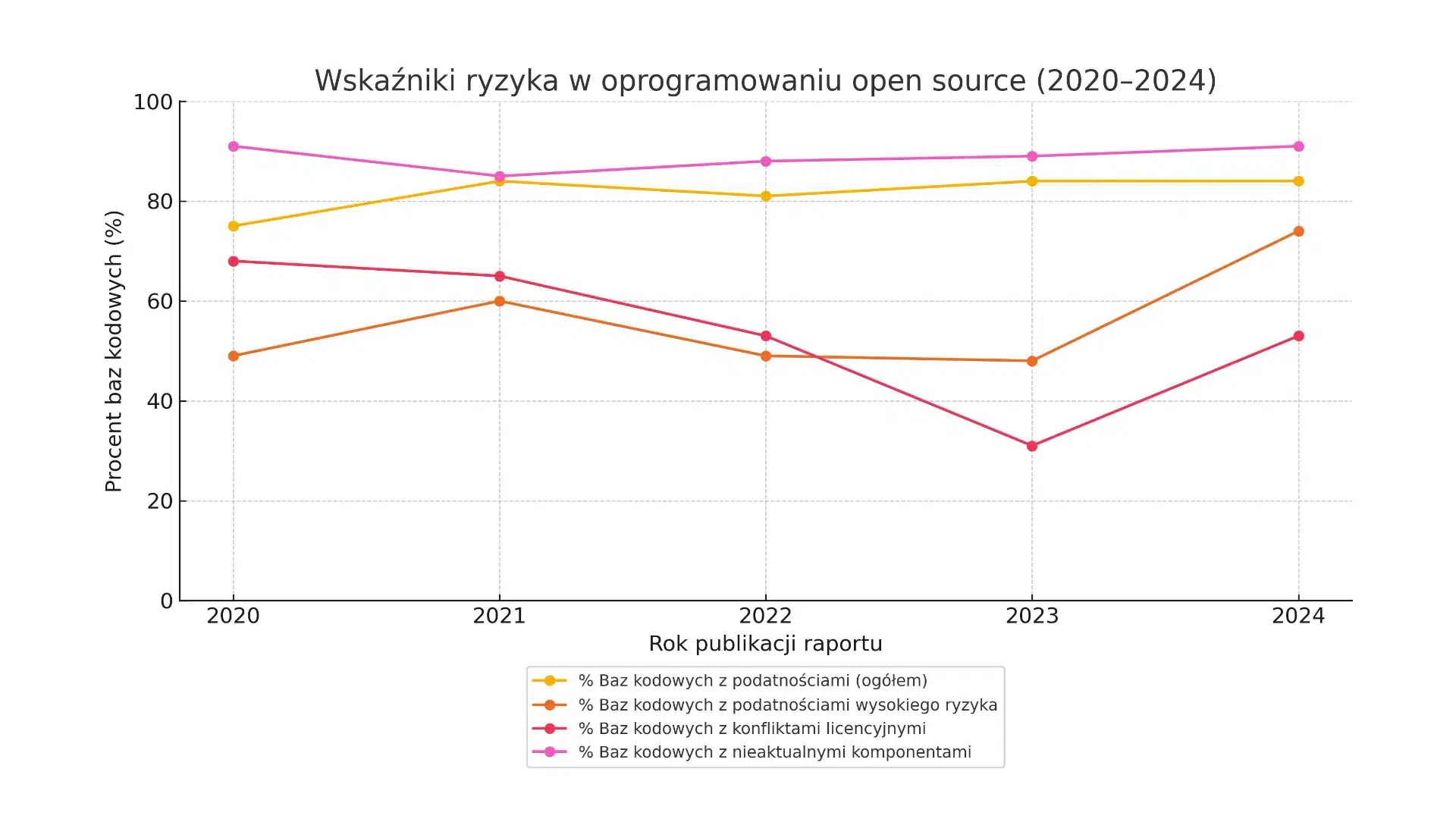

The real growth and margins lie in moving away from standard, saturated services towards advanced managed solutions, that is, in the evolution from Endpoint Detection and Response (EDR) to Managed Detection and Response (MDR) and Extended Detection and Response (XDR).

These solutions integrate signals from across the customer’s IT environment and provide 24/7 monitoring and expert response. It is no coincidence that 97% of the world’s top-earning managed service providers (MSPs) offer managed security services.

Another powerful new trend is the democratisation of advanced security operations centre (SOC) capabilities through AI. AI assistants for analysts allow even small teams to work with the efficiency of seasoned experts, paving the way for a highly effective and profitable SOC-as-a-Service offering.

3. The economics of the cloud: the imperative of optimisation

As companies move more and more of their operations to the cloud, a new and fundamental challenge is emerging. For 82% of organisations, managing cloud spend is now the number one concern, and it is estimated that up to a third of that spend is wasted through inefficient configuration or lack of oversight.

This is a huge, easily quantified business pain for which IT partners can offer an effective remedy.

The immediate answer is FinOps, an operational discipline that brings together finance, engineering and business teams to introduce financial accountability into cloud consumption. The market for FinOps tools and services is growing at more than 11% per year.

This is a high-value consulting practice that can help clients reduce cloud costs by 20-30%. Instead of one-off audits, partners can offer continuous FinOps-as-a-Service, creating a recurring, high-margin revenue stream.
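To make the 20-30% figure tangible in a client conversation, here is a minimal Python sketch that converts it into an annual savings range. The function name `finops_savings_range` and the USD 1.2 million annual spend are hypothetical, chosen purely for illustration; the sketch simply applies the percentage range quoted in this article and is not a FinOps methodology or tool.

```python
def finops_savings_range(annual_cloud_spend: float,
                         low_rate: float = 0.20,
                         high_rate: float = 0.30) -> tuple[float, float]:
    """Return (low, high) annual savings for a hypothetical client.

    The default rates reflect the 20-30% cost reduction range cited in the
    text; they are assumptions, not guaranteed outcomes.
    """
    if annual_cloud_spend < 0:
        raise ValueError("annual cloud spend must be non-negative")
    if not 0 <= low_rate <= high_rate <= 1:
        raise ValueError("rates must satisfy 0 <= low_rate <= high_rate <= 1")
    # Savings scale linearly with spend for a fixed percentage reduction.
    return annual_cloud_spend * low_rate, annual_cloud_spend * high_rate


if __name__ == "__main__":
    # Hypothetical client spending USD 1.2 million a year on cloud services.
    low, high = finops_savings_range(1_200_000)
    print(f"Estimated annual savings: ${low:,.0f} to ${high:,.0f}")
```

Expressing the result as an amount of money rather than a percentage is what lets the conversation move from technical features to business outcomes.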

A further opportunity lies in the complexity of multi-cloud environments, which up to 92% of companies are adopting. Partners that can offer a unified management plane across AWS, Azure and GCP become invaluable to their customers.

Global trends provide the map, but success depends on skilfully navigating the local context. For Polish IT partners, 2025 is a time of strategic decisions: they need to move away from competing mainly on cost and towards building deep specialisation.

Rather than trying to be an "everything provider", partners should aim to be the best in a selected, high-margin niche, such as regulatory compliance (e.g. DORA), GenAI deployments for a specific industry, or FinOps consulting.

Since the biggest barrier for clients is the lack of talent, a partner's most valuable asset is its team of experts. Investing in skills, certification and the retraining of talented developers as AI/ML engineers is the most important investment in future profitability.

Equally important is changing the way business conversations are conducted. The discussion must shift from technical features to business outcomes, and partners need to learn to quantify the value of their services in the language of risk reduction, operational savings and return on investment.

The market analysis for 2025 leads to one conclusion: "The Golden Grail is not a single service, but a strategically balanced portfolio. The partner of the future is one that is specialised, agile and obsessed with delivering tangible value to customers."

A historic opportunity is opening up for Polish IT companies. The country's geographical location, access to outstanding talent and dynamic economy create ideal conditions for a leap forward in global competitiveness.

The key will be the courage to invest in the most difficult but also the most promising areas of the market, and to become an indispensable guide for customers in the era of smart technology.