Imagine two scenarios. In the first, it’s 2003, and the owner of a small manufacturing company looks anxiously at a silent server that has paralysed the ordering system.

In a panic, he calls his ‘IT man’, hoping that he will find time to come and diagnose the problem. Every minute of downtime is a measurable loss.

In the second scenario, it is today. The CEO of a technology company receives a notification on his smartphone. It’s an automated report from his Managed Service Provider (MSP), informing him that a potential vulnerability in the company’s cloud security was discovered and patched overnight, before cybercriminals had time to exploit it.

The company’s operations were not disrupted for even a second.

This contrast perfectly illustrates the fundamental transformation that has taken place in the world of IT services. The evolution of managed service providers is not just a story of adaptation to new technologies.

It is a story of a complete redefinition of the business model, driven by escalating cyber threats, the increasing complexity of cloud environments and the need for automation.

The modern MSP has ceased to be just an external IT department called in to put out fires. It has become a key partner in risk management, an engine of digital transformation and a guardian of business continuity.

Foundations of the past: the era of the ‘break-fix’ model

Before IT service providers became proactive partners, the dominant operating model was the so-called ‘break-fix’ model. Its logic was simple: when something breaks, a specialist is called in to fix it.

The process was purely transactional: the customer experienced a breakdown, the technician arrived, repaired it and invoiced for his time and parts.

The biggest drawback of this model was its economic structure, which created an inevitable conflict of interest: the IT service provider only made money when the client had problems.

The more failures, the higher the provider’s profits. The customer sought maximum stability, while the provider’s business model depended on instability.

This structural flaw stood in the way of relationships built on trust, and the model had to give way as soon as companies understood that their survival depended on reliable technology.

Proactive breakthrough: the birth of the modern MSP

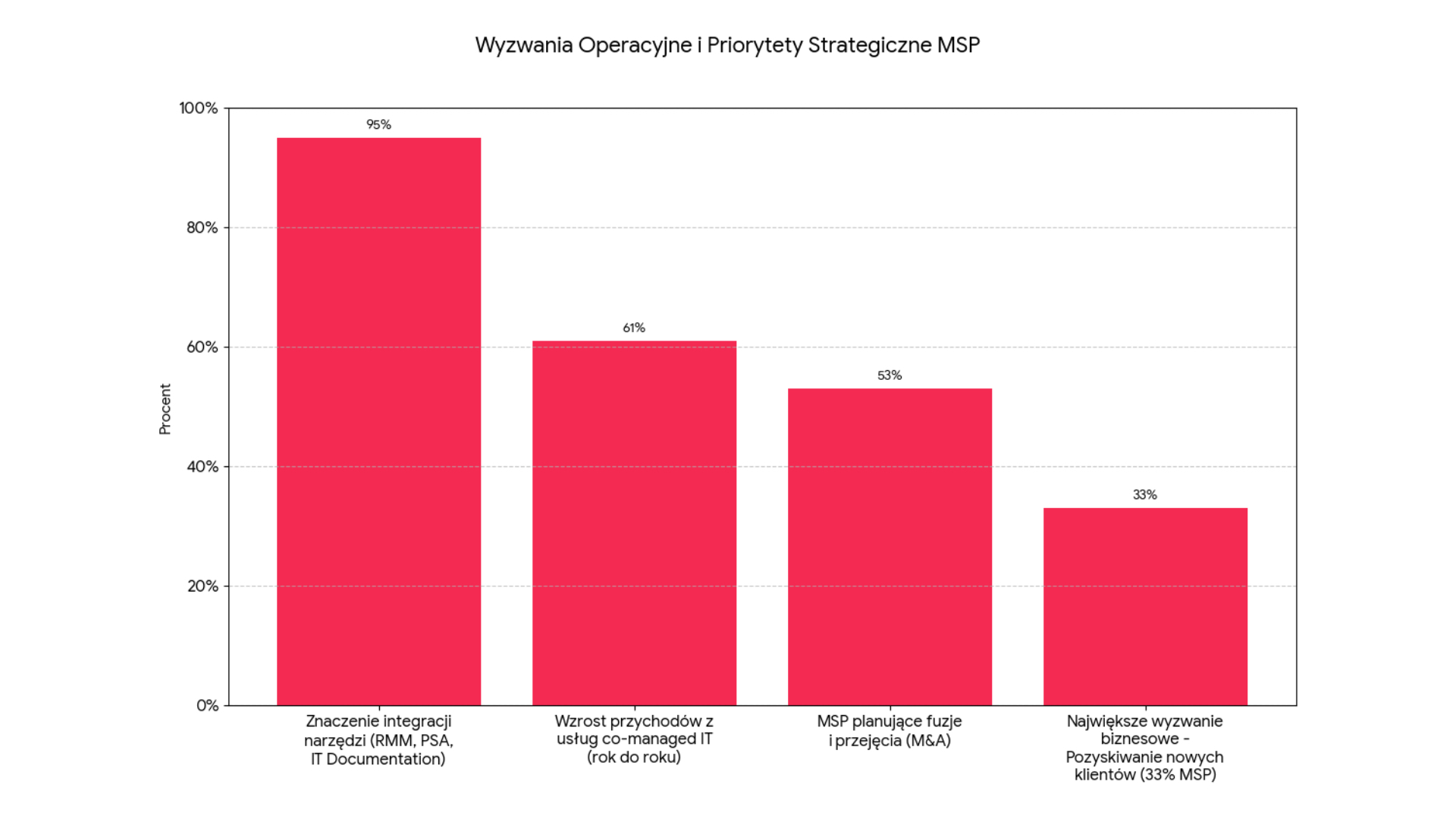

The twilight of the ‘break-fix’ era was hastened by technologies that made fundamental change possible. Remote monitoring and management (RMM) and professional services automation (PSA) platforms catalysed the revolution.

RMM tools allowed suppliers to continuously monitor the health of customer systems in an automated manner, enabling issues to be identified and resolved before they led to downtime.
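As a rough illustration of what automated monitoring means in practice, the sketch below checks a batch of metrics against alert thresholds and reports anything that has crossed a limit. It is a minimal example under stated assumptions: the `Metric` type, the metric names and the threshold values are all hypothetical, and real RMM platforms collect metrics through their own agents and let the MSP configure limits per client.

```python
from dataclasses import dataclass


@dataclass
class Metric:
    # Hypothetical metric record: which machine, what was measured, the reading.
    host: str
    name: str
    value: float


# Hypothetical thresholds (percentages); a real RMM tool would make these configurable.
THRESHOLDS = {"disk_used_pct": 85.0, "cpu_pct": 90.0, "mem_pct": 90.0}


def check(metrics):
    """Return a warning string for every metric at or above its threshold.

    Metrics with no configured threshold are ignored.
    """
    alerts = []
    for m in metrics:
        limit = THRESHOLDS.get(m.name)
        if limit is not None and m.value >= limit:
            alerts.append(f"{m.host}: {m.name} at {m.value:.0f}% (limit {limit:.0f}%)")
    return alerts


if __name__ == "__main__":
    sample = [
        Metric("srv-orders", "disk_used_pct", 91.0),
        Metric("srv-orders", "cpu_pct", 35.0),
        Metric("srv-mail", "mem_pct", 72.0),
    ]
    for line in check(sample):
        print(line)  # prints "srv-orders: disk_used_pct at 91% (limit 85%)"
```

The point of the sketch is the timing: the nearly full disk on the ordering server is flagged while the server is still running, which is exactly the failure the owner in the 2003 scenario only discovered after the fact.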

The most important innovation, however, was a change in the business model. MSPs moved from hourly billing to a fixed monthly subscription fee, which gave them monthly recurring revenue (MRR).

For the customer, this meant predictable costs; for the MSP, a stable revenue stream. Service level agreements (SLAs) gave customers contractual guarantees on response times or system availability.

Most importantly, this model aligned the interests of both parties. The MSP’s profitability became directly tied to the stability of the client’s IT environment: every failure was now a cost to the provider rather than a source of income, which gave the provider a strong incentive to prevent problems.

The cyber security imperative: from administrator to defender

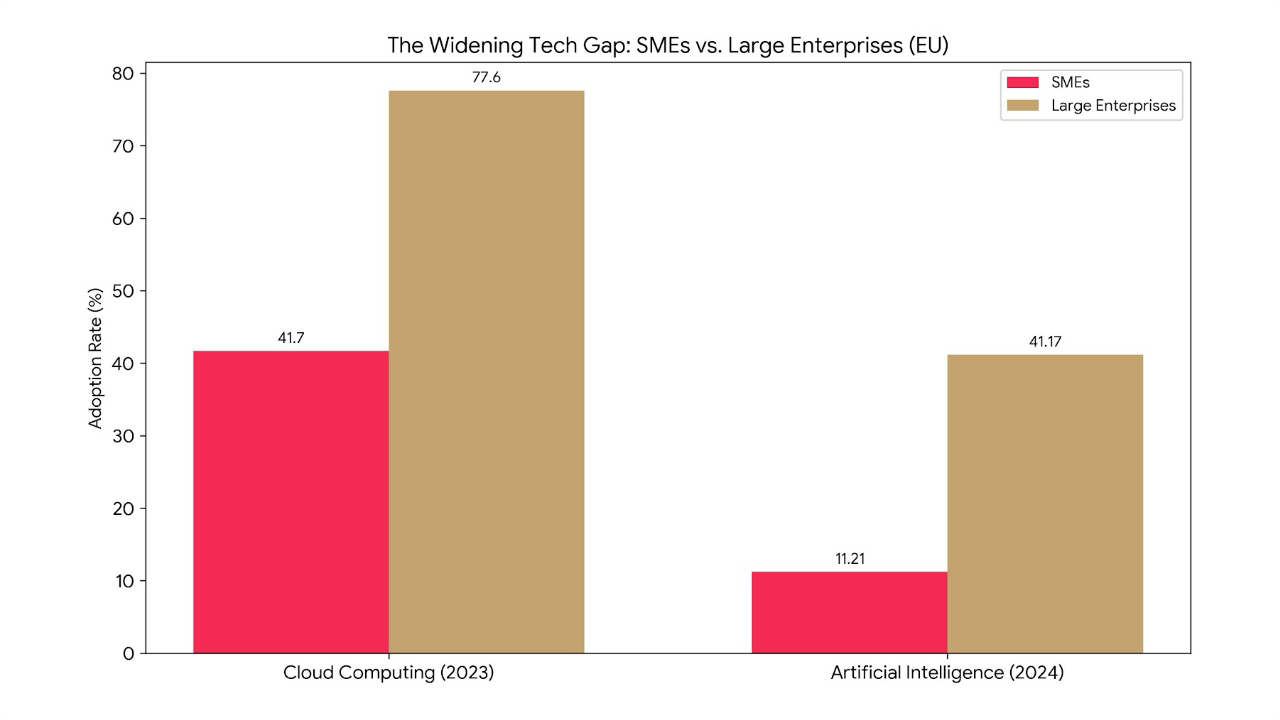

If proactivity was the spark that started the revolution, the explosion of cyber threats has become the fuel that drives further evolution. Small and medium-sized enterprises (SMEs) have become a prime target for cybercriminals, and protection against attacks has become one of their top business priorities.

Research from 2024 revealed that as many as 78% of SMEs fear that a major cyber-attack could bankrupt them.

In response, cyber security has ceased to be an add-on: it is now central to the MSP’s offering and a key driver of revenue growth.

Market analysis shows that 97% of the highest-revenue MSPs offer a wide range of managed security services. Clients are no longer just looking for tools; 64% expect strategic guidance from their MSP.

This has forced providers to evolve towards a managed security service provider (MSSP) model, offering advanced solutions such as managed detection and response (MDR), security information and event management (SIEM) and security awareness training.

By taking responsibility for cyber security, the MSP has fundamentally changed its role: it no longer manages just the technology, but also the customer’s business risk.

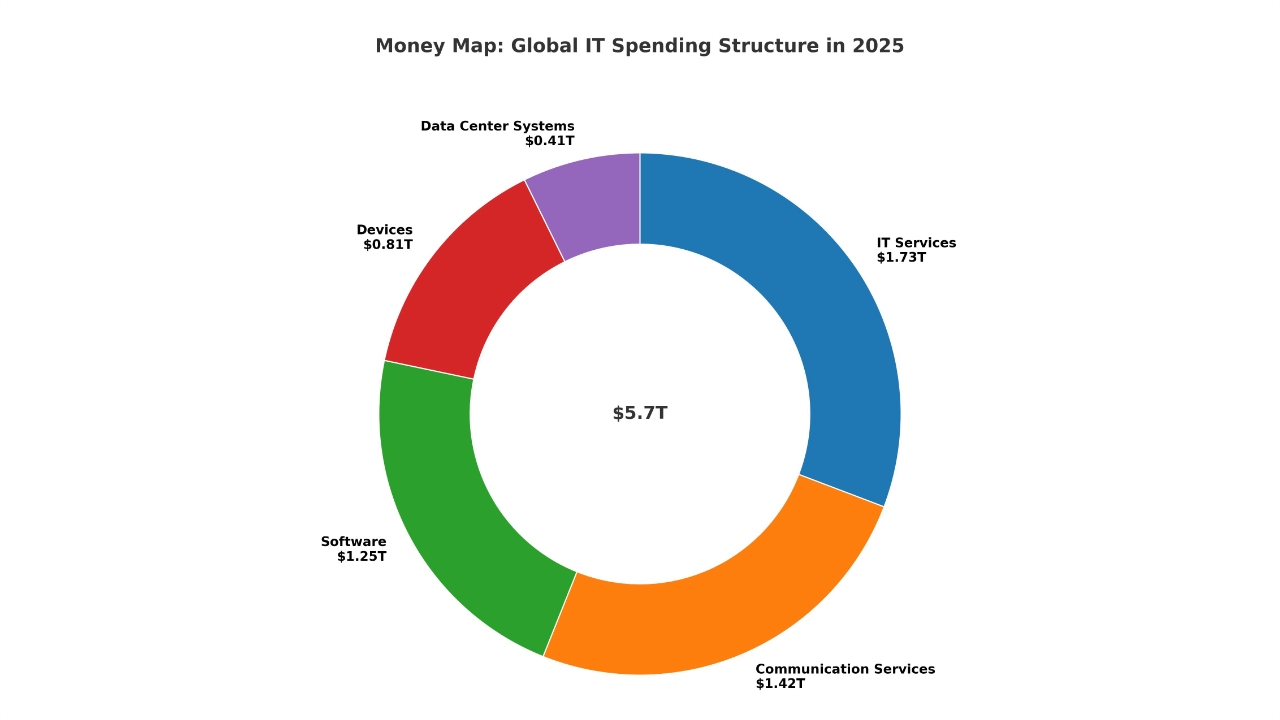

The cloud revolution: managing hybrid complexity

Contrary to early predictions, the growth of public clouds has not made MSPs redundant. On the contrary, the mass adoption of hybrid and multi-cloud strategies has created a new level of complexity that companies have been unable to cope with on their own.

This has opened up a huge opportunity for mature MSPs. They have transformed themselves into cloud strategists and integrators, helping clients develop strategies, implement complex migrations and, crucially, optimise cloud costs (FinOps).

In an era of increasing data privacy regulation, MSPs have also started to act as ‘data sovereignty brokers’, advising on where data can and should be stored to comply with regulations.

The ability to design and manage a fully customised hybrid environment, combining on-premises resources with private and public cloud, has strengthened the MSP’s position as a central coordinator of the client’s entire IT ecosystem.

Innovation horizon: AIOps and hyperautomation

The most mature MSPs today stand on the threshold of the next evolutionary leap, defined by AIOps (AI for IT Operations) and hyperautomation. AIOps uses big data and machine learning to automate and streamline IT operations, moving management from proactive to predictive.

Instead of reacting to known warning signs, AIOps aims to predict problems and prevent them before any symptoms appear.
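Prediction does not have to be exotic to be useful. As a minimal sketch, assuming daily disk-usage samples are available, the hypothetical function below fits a straight line by least squares and estimates how many days remain before a disk fills up. Commercial AIOps platforms use far richer machine-learning models; the function name and the linear-trend assumption are illustrative only.

```python
def days_until_full(usage_gb, capacity_gb):
    """Estimate days remaining until a disk reaches capacity.

    Hypothetical helper: fits a least-squares line to daily usage samples
    (one sample per day, oldest first) and extrapolates forward from the
    last sample. Returns None if usage is flat or shrinking.
    """
    n = len(usage_gb)
    if n < 2:
        raise ValueError("need at least two samples")
    xs = range(n)  # day indices 0..n-1
    mean_x = (n - 1) / 2
    mean_y = sum(usage_gb) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, usage_gb))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var  # growth in GB per day
    if slope <= 0:
        return None  # no upward trend, so no predicted exhaustion
    intercept = mean_y - slope * mean_x
    # Day index at which the fitted line hits capacity, relative to the last sample.
    return (capacity_gb - intercept) / slope - (n - 1)


if __name__ == "__main__":
    # Usage grows by 10 GB a day on a 500 GB disk: about six days of headroom left.
    print(days_until_full([400, 410, 420, 430, 440], 500))  # prints 6.0
```

A warning six days ahead leaves time to add storage or archive data during normal working hours, long before the disk-full error that a threshold check would only catch at the last moment.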

Practical applications include intelligent correlation of thousands of alerts into a single actionable incident, predictive analytics that forecast future resource requirements and automated remediation that resolves repetitive problems without human intervention.
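Alert correlation can be illustrated with a deliberately simple rule. The sketch below is not machine learning: it groups alerts from the same host that arrive within a fixed time window of each other into one incident, which is only the most basic form of the correlation AIOps platforms perform. The `Alert` and `Incident` types, the host names and the 300-second window are hypothetical choices for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    timestamp: int  # seconds since an arbitrary start point
    host: str
    message: str


@dataclass
class Incident:
    host: str
    start: int
    end: int
    alerts: list = field(default_factory=list)


def correlate(alerts, window=300):
    """Group alerts into incidents (hypothetical rule-based correlation).

    An alert joins the host's most recent incident if it arrives within
    `window` seconds of that incident's latest alert; otherwise it opens
    a new incident.
    """
    incidents = []
    open_by_host = {}  # host -> most recent incident for that host
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        inc = open_by_host.get(alert.host)
        if inc is not None and alert.timestamp - inc.end <= window:
            inc.alerts.append(alert)
            inc.end = alert.timestamp
        else:
            inc = Incident(alert.host, alert.timestamp, alert.timestamp, [alert])
            incidents.append(inc)
            open_by_host[alert.host] = inc
    return incidents


if __name__ == "__main__":
    raw = [
        Alert(0, "db-01", "disk latency high"),
        Alert(20, "db-01", "query timeout"),
        Alert(45, "db-01", "replication lag"),
        Alert(30, "web-01", "HTTP 500 rate up"),
        Alert(900, "db-01", "disk latency high"),
    ]
    for inc in correlate(raw):
        print(inc.host, inc.start, len(inc.alerts))
    # prints:
    # db-01 0 3
    # web-01 30 1
    # db-01 900 1
```

Five raw alerts collapse into three incidents, so an engineer sees one database problem instead of three separate pages. Real platforms go further, for example by linking the database and web-server alerts through known service dependencies.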

Combined with hyperautomation, which streamlines entire business processes (e.g. onboarding new customers), these technologies become a key competitive advantage.

AIOps is becoming a prerequisite for managing modern, complex IT environments, and providers that successfully implement these technologies will be able to serve more demanding customers with greater efficiency.

An essential engine for digital transformation

The evolution of managed service providers is a story of remarkable adaptation and a continuous climb up the value chain. The MSP has moved from being a reactive technician, whose success was measured by the speed of repair, to a predictive, strategic partner, whose value is defined by its contribution to the innovation, resilience and profitability of the client’s business.

The MSP of the future is not a technology vendor, but a consultancy with deep technical expertise. It thrives in an environment of complexity, actively manages risk and uses intelligent automation to deliver measurable results.