For years, the relationship between the CIO and CFO resembled a long-established marriage, communicating mainly through laconic notes left on the fridge. The CIO would ask for budgets for ‘solutions that no one but him understands’, and the CFO would respond with a question about cost optimisation, treating the server room as a necessary evil – an expensive black box that would be best moved entirely to the cloud and forgotten about.

This model is about to become history. The latest Deloitte report, based on a survey of leaders from more than 500 US corporations, leaves no illusions: a financial tsunami is coming that cannot be waited out in a silo.

The projected tripling of AI infrastructure budgets by 2028 marks the critical moment when the technology becomes too expensive, too energy-intensive and, most importantly, too strategic for its oversight to be left solely in the hands of engineers. When spending on computing power triples in a few years, it ceases to be an issue for the IT department and becomes a matter of sovereignty and survival for the entire organisation.

Blurring boundaries is a painful but fascinating process. The CFO’s spreadsheet and the CIO’s hybrid architecture diagram are no longer two different documents. It’s time to abandon translators and diplomatic protocols – the leaders of tomorrow must become bilingual, because a communication error between the boardroom and the server room could cost a fortune.

Financial culture shock

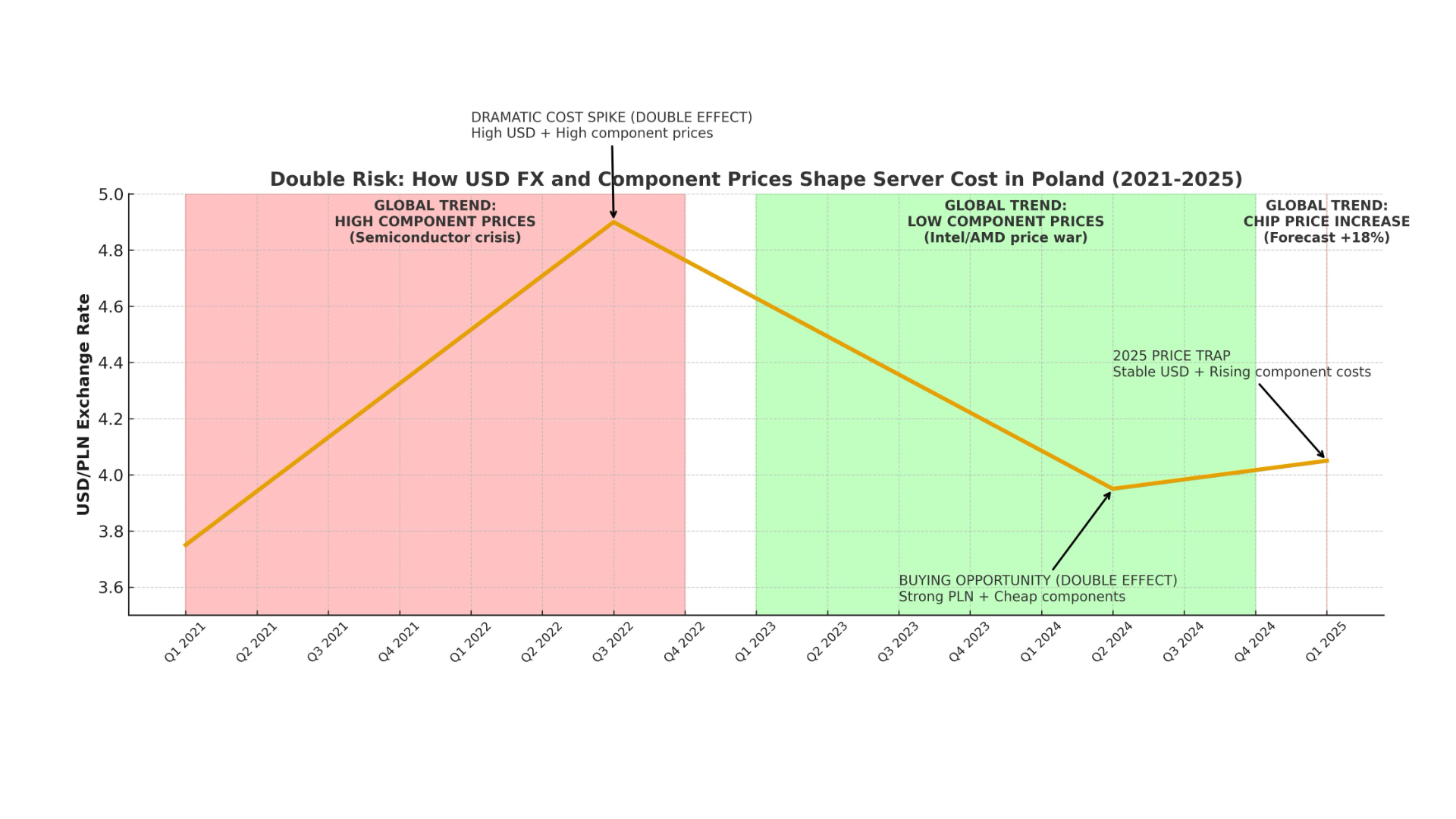

For the past decade, the mantra of CFOs has been ‘OpEx above all else’. The public cloud was supposed to be the cure for every ill – a flexible cost that could be scaled up or down, avoiding the costly maintenance of an in-house server room. However, artificial intelligence, with its insatiable appetite for computing power, is putting this optimism to a brutal test.

There is a clear conclusion from the Deloitte report: the traditional IT spending model, based on one-off upgrade spurts, is becoming a thing of the past. Instead of cyclical ‘fleet replacement’ projects, IT departments are moving to a model of constant, high and growing annual spending. After all, AI is not a sprint after which you can rest; it is an arms race in which the fuel – i.e. computing power – gets more expensive with each new deployment.

Interestingly, there is a twist: the CapEx model is returning to favour. Companies that not long ago were aiming for total ‘hardwarelessness’ are now queuing up for their own GPUs and TPUs. Why? Because at the scale Deloitte is talking about – where the number of tokens being processed doubles every year – renting ‘power’ in the cloud is simply becoming economically inefficient.
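The rent-versus-own argument can be made concrete with a minimal break-even sketch. Every number and name here (`breakeven_year`, the price per million tokens, the purchase price) is an illustrative assumption, not a figure from the Deloitte report. The sketch also assumes that the purchased hardware can absorb the whole workload, which real capacity planning would have to verify.

```python
from itertools import accumulate


def yearly_token_volumes(initial_tokens, growth, years):
    """Token volume per year, growing by a constant factor each year."""
    return [initial_tokens * growth ** y for y in range(years)]


def breakeven_year(initial_tokens, growth, years,
                   cloud_price_per_million, capex, owned_opex_per_year):
    """First year (1-based) in which cumulative cloud spend exceeds the
    cumulative cost of owning the hardware, or None if it never does
    within the horizon. Assumes owned capacity is always sufficient."""
    volumes = yearly_token_volumes(initial_tokens, growth, years)
    cloud_cumulative = accumulate(
        v / 1_000_000 * cloud_price_per_million for v in volumes
    )
    for year, cloud_total in enumerate(cloud_cumulative, start=1):
        owned_total = capex + owned_opex_per_year * year
        if cloud_total > owned_total:
            return year
    return None


if __name__ == "__main__":
    # Hypothetical inputs: 1 billion tokens in year one, doubling annually.
    print(breakeven_year(1e9, 2.0, 5, 10.0, 50_000, 5_000))
```

With flat volumes the cloud bill grows linearly and ownership may never pay off within the horizon; it is the compounding growth that tips the balance towards CapEx.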

For CFOs, this is a real culture shock. They have to accept that having their own physical AI infrastructure becomes a strategic asset, not just an operational ballast. An in-house hybrid server room becomes an insurance policy for the future. Companies stop asking ‘how much is it going to cost us this month’ and start calculating how much computing power they need to own so that their models don’t get stuck in a queue at hyperscalers.

The ‘30 pilots’ trap, or where the money is leaking away

The figure of ‘30 pilot projects’ sounds impressive in an annual report and looks great on shareholder slides. However, for the CIO-CFO duo, this statistic is first and foremost a wake-up call. Deloitte indicates that by 2028, almost 70% of companies will be conducting AI trials on this scale. The problem is that, with soaring infrastructure costs, spreading resources across thirty different fronts is a sure way to end up with so-called ‘innovation theatre’.

In this model there is plenty of activity and dozens of prototypes are being developed, but none of them gets beyond the experimental phase to make a real contribution to the profit and loss account. With giants such as Anthropic reserving gigawatts of power for years ahead, smaller players have to demonstrate downright surgical precision in resource allocation.

This is where the new role of management manifests itself: the CIO and the CFO must jointly act as ‘silicon guardians’. Their job is no longer just to check that the budget balances, but to build a strict hierarchy of priorities. Each of the 30 pilots should pass through the sieve of a hard ROI analysis: does this model really optimise the process, or is it just a technological curiosity?
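One way to picture this sieve is a simple ranking exercise. The sketch below is a greedy heuristic under stated assumptions, not a method described by Deloitte: the `Pilot` fields, the hurdle rate `min_roi` and all figures are hypothetical, and ROI is taken as (benefit minus compute cost) divided by compute cost.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pilot:
    name: str
    annual_benefit: float        # estimated value delivered per year
    annual_compute_cost: float   # estimated compute spend per year

    @property
    def roi(self) -> float:
        return (self.annual_benefit - self.annual_compute_cost) / self.annual_compute_cost


def select_pilots(pilots, compute_budget, min_roi=0.0):
    """Fund pilots in descending ROI order until the budget runs out.
    Pilots below the hurdle rate are never funded."""
    funded = []
    remaining = compute_budget
    for pilot in sorted(pilots, key=lambda p: p.roi, reverse=True):
        if pilot.roi < min_roi:
            break  # list is sorted, so every later pilot is below the hurdle too
        if pilot.annual_compute_cost <= remaining:
            funded.append(pilot)
            remaining -= pilot.annual_compute_cost
    return funded


if __name__ == "__main__":
    portfolio = [
        Pilot("invoice-matching", 300, 100),
        Pilot("support-chatbot", 150, 100),
        Pilot("slide-generator", 90, 100),
        Pilot("demand-forecast", 500, 400),
    ]
    for p in select_pilots(portfolio, compute_budget=250, min_roi=0.2):
        print(p.name, round(p.roi, 2))
```

A greedy ranking does not guarantee the best possible use of the budget, but it makes the trade-off explicit: every pilot that stays alive consumes compute that a higher-ranked one could have used.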

Any decision to allocate resources to a particular project is a de facto decision about which area the company wants to gain a competitive advantage in and which it is letting go. The real art of management in 2028 will not be how many AI projects can get off the ground, but how many of them can be killed off early enough for the most promising ones to have something to work on.

New business grammar: Tokens instead of man-hours

“The boundary between business and technology isn’t just blurring – it’s ceasing to exist” – these words from Chris Thomas of Deloitte should be engraved above the entrance to every modern conference room. The traditional grammar of business, based on man-hours, licences per user or the number of ‘seats’ in a CRM system, is giving way to a new currency: tokens.

For CFOs, understanding what a token is and how it affects the balance sheet becomes as critical as analysing operating margins. Tokens are the blood in the veins of AI models, and their volume directly translates into computing power requirements. If, as the report predicts, their volume in corporate processes is set to double or triple in the next three years, then the infrastructure discussion is no longer a debate about ‘buying hardware’. It is a debate about the capacity of the entire enterprise and its ability to generate value.
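What ‘double or triple in three years’ means for an annual budget is a matter of compound growth. The sketch below is only arithmetic under the assumption of a constant yearly growth rate; the function names and the starting volume are illustrative.

```python
def implied_annual_growth(multiple, years):
    """Constant yearly growth factor that yields `multiple` after `years`."""
    return multiple ** (1 / years)


def projected_volumes(current_tokens, multiple, years):
    """Token volume at the end of each year under constant growth."""
    rate = implied_annual_growth(multiple, years)
    return [current_tokens * rate ** y for y in range(1, years + 1)]


if __name__ == "__main__":
    for multiple in (2, 3):
        rate = implied_annual_growth(multiple, 3)
        print(f"x{multiple} in 3 years is about {(rate - 1) * 100:.0f}% growth per year")
```

Doubling over three years corresponds to roughly 26% growth per year and tripling to roughly 44%, so the gap between the two scenarios widens with every budget cycle.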

In this new order, AI infrastructure is being promoted from the role of a quiet back office to that of a major actor on the frontline of the battle for customers. Companies that are able to effectively manage their own ‘computing portfolio’ – skilfully combining closed, open and proprietary on-premises models – are gaining a flexibility that competitors relying solely on off-the-shelf SaaS services can only dream of.

Strategic advantage in 2028 will not come from having the best marketing slogans, but from optimising the cost of generating a single intelligent operation. Infrastructure becomes the foundation of innovation: it determines how quickly a company can implement new functions and how deeply it can automate its structures. Whoever controls access to processors and optimises their use de facto controls the rate at which the business can grow. This is the new economy of scale, in which hardware becomes the hardest of the hard currencies of business.