The first phase of the AI revolution established a simple, clear picture of the market. At the top sat Nvidia, supplying the technological ‘shovels’ in the form of GPUs, with the cloud giants Amazon, Microsoft and Google just below, offering access to the ‘goldfields’ of computing power.

However, the latest financial results from traditional hardware vendors such as Dell and HPE show that this picture was incomplete. The centre of gravity in the key enterprise segment is beginning to shift towards private infrastructure, signalling that the market is entering a new, more mature phase in which control, security and cost matter most.

The financial figures leave little room for doubt. Dell’s servers and networking segment grew by 69% in the last quarter, an exceptional result in such a mature sector.

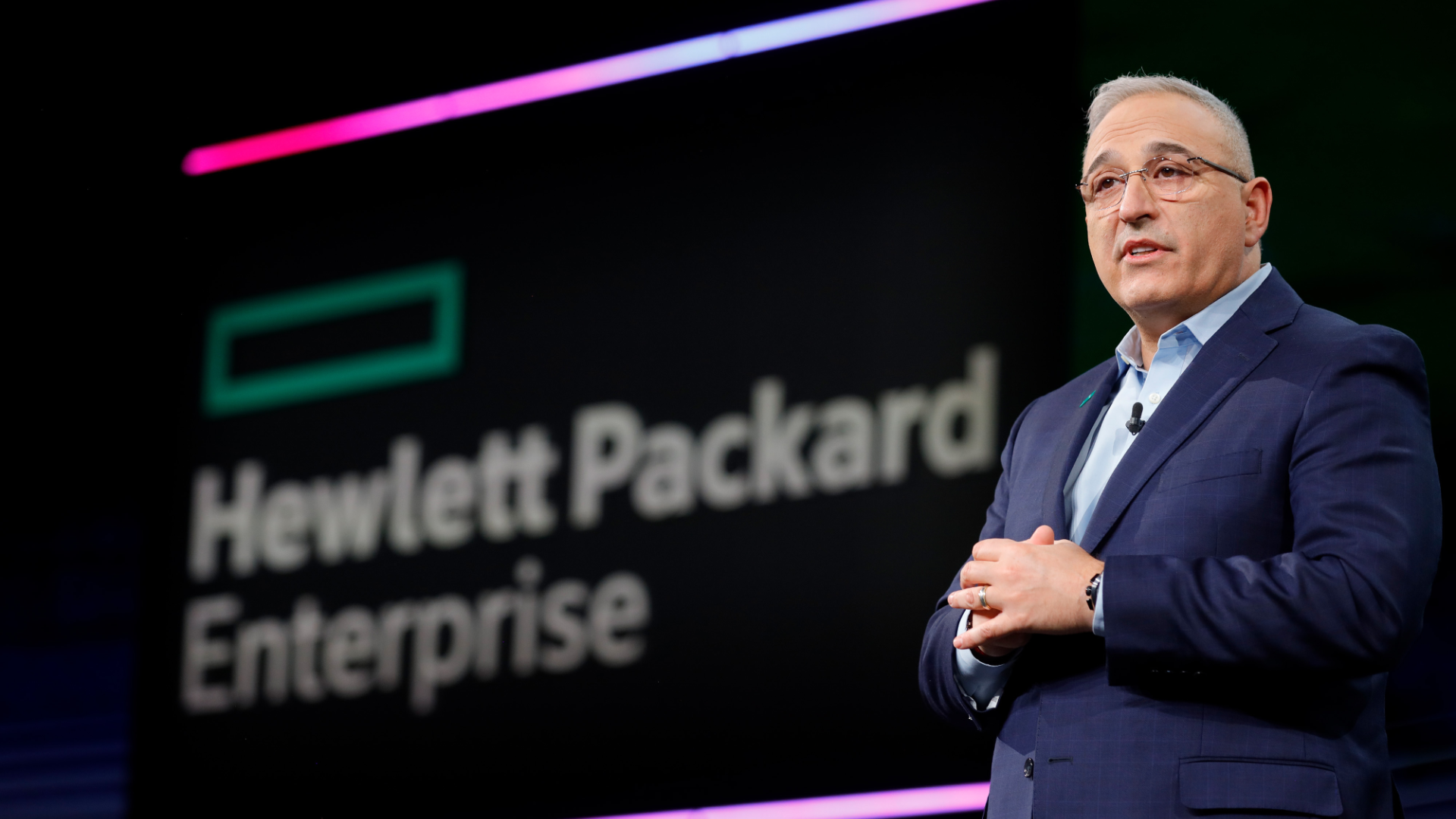

This jump translated into record company-wide revenue of $29.8bn. Over the same period, Hewlett Packard Enterprise reported that AI-dedicated systems generated $1.6bn in revenue and that its entire server segment grew by a solid 16%.

We’re not talking about selling standard machines. We’re talking about advanced, high-margin systems, saturated with the latest GPUs, ultra-fast interconnects and huge amounts of memory.

These systems are driving the growth, and they clearly indicate where companies are now placing their largest technology budgets.

Behind this fundamental market shift is primarily a pragmatic calculation and strategic course correction. The ‘cloud-first’ model that has dominated IT thinking for the past decade is evolving towards a more sustainable ‘cloud-smart’ approach or, to put it simply, towards a hybrid architecture.

While the public cloud remains an indispensable environment for rapid prototyping, experimentation and scaling of variable workloads, large-scale production AI deployments have highlighted its structural limitations.

The motivation to invest in one’s own equipment is based on three pillars that have become critical.

Firstly, data security and sovereignty have come to the fore. In an era of regulations such as the GDPR in Europe, processing sensitive corporate data (intellectual property, financial data or customer information) on external, shared infrastructure poses real and often unacceptable risks.

For many industries, from finance to healthcare, physical control over data is not optional but a legal requirement. The concept of ‘data gravity’ is becoming a reality: it is easier to bring computing power to massive corporate datasets than to transfer petabytes of information to the cloud.

Secondly, businesses have begun to look closely at total cost of ownership (TCO). While the initial capital outlay to purchase their own servers is high, the operational cost of renting cloud resources to support sustained, intensive AI workloads can be astronomical and unpredictable in the long term.

For companies that train and operate models continuously, having their own infrastructure offers much better financial predictability and a lower cost over a 3-5 year cycle.
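The break-even reasoning behind this comparison can be sketched in a few lines of Python. This is a minimal illustration, not a financial model: the function name `break_even_month` and all three cost figures are hypothetical placeholders chosen only to show the arithmetic, and they do not come from Dell, HPE or any cloud price list.

```python
from typing import Optional


def break_even_month(
    capex: int,
    monthly_opex: int,
    monthly_cloud_rental: int,
    horizon_months: int = 60,
) -> Optional[int]:
    """Return the first month in which cumulative on-premises cost is no
    higher than cumulative cloud rental, or None if that never happens
    within the horizon (60 months, i.e. a 5-year cycle, by default)."""
    for month in range(1, horizon_months + 1):
        on_prem_total = capex + monthly_opex * month   # purchase + running costs
        cloud_total = monthly_cloud_rental * month     # pure rental
        if on_prem_total <= cloud_total:
            return month
    return None


# Hypothetical figures, for illustration only.
CAPEX = 400_000                 # one-off server purchase
MONTHLY_OPEX = 8_000            # power, cooling, staff, maintenance
MONTHLY_CLOUD_RENTAL = 28_000   # equivalent sustained cloud capacity

if __name__ == "__main__":
    month = break_even_month(CAPEX, MONTHLY_OPEX, MONTHLY_CLOUD_RENTAL)
    print(f"Break-even month: {month}")
```

With these assumed inputs, owning the hardware saves 20,000 per month in running costs, so the 400,000 purchase pays for itself well inside a 3-5 year cycle. If the cloud rental is lower than, or close to, the on-premises running cost, the function returns `None`, which corresponds to the variable or short-lived workloads for which the cloud remains the better fit.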

Thirdly, performance and customisation requirements cannot be ignored. Latency-sensitive AI applications, crucial in industrial automation, autonomous vehicle systems or banking, must respond within milliseconds.

Even the small latency added by transferring data to and from the cloud can be unacceptable in such scenarios. In-house hardware also allows deep optimisation and customisation of the entire stack, from hardware to software, for the specific needs of the model, which is often impossible in standardised cloud environments.

In this new landscape, Dell and HPE find themselves perfectly placed. Their advantage is not just in the technology, but in the deep understanding of the enterprise market that they have built up over decades.

This covers not just commercial relationships, but also knowledge of procurement cycles, the ability to provide global technical support backed by SLAs, and experience in integrating new solutions with existing, complex IT systems. What’s more, they have found exceptionally fertile ground.

It is estimated that up to 70% of servers in companies are older-generation hardware, which is not only inadequate for AI tasks but also extremely energy-inefficient. The pressure to upgrade is therefore twofold: on the one hand, the need for computing power; on the other, rising energy costs and sustainability (ESG) goals.

The artificial intelligence market is entering a new, more sustainable phase. This does not mean the end of the cloud, but a redefinition of its role as one of the key elements in a broader, hybrid strategy. The experimentation phase is coming to an end and the time for strategic, long-term deployments is beginning.

In this game, the lead is going to the providers that can deliver security, performance and cost predictability inside the customer’s own data centre.