There are moments in the history of technology that redefine the limits of possibility. The mastery of fire, the invention of printing, the digital age – each of these eras was sparked by a fundamental discovery.

Today we stand on the threshold of another such transformation: not simply an evolution in computing power, but the birth of an entirely new paradigm. We are talking about quantum computing.

The race to build a functional quantum computer is the most important technological and geopolitical duel of the 21st century.

At stake is the ability to solve problems that are beyond the reach of today's most powerful supercomputers. These range from designing drugs at the molecular level, to creating revolutionary materials, to breaking the public-key encryption that protects most of today's digital communications.

At the heart of this revolution is quantum mechanics. Thanks to superposition, a qubit (the quantum equivalent of a bit) can exist in a combination of the states 0 and 1 at the same time. Thanks to entanglement, the states of several qubits can be linked so that they can no longer be described independently. It is this fundamental difference that gives quantum computers their enormous potential.
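To make superposition and entanglement concrete, here is a minimal state-vector sketch in plain Python. The function names are our own and do not come from any quantum SDK. It stores a two-qubit state as four complex amplitudes and applies a Hadamard gate to put the first qubit into superposition. It then applies a CNOT gate to entangle that qubit with the second, producing a Bell state in which only the outcomes 00 and 11 can occur.

```python
import math

# An n-qubit state is a list of 2**n complex amplitudes, indexed by the
# basis states |00...0>, |00...1>, ... (qubit 0 is the most significant bit).

def apply_hadamard(state, target, n):
    """Apply a Hadamard gate to qubit `target`."""
    new = [0j] * len(state)
    bit = 1 << (n - 1 - target)
    s = 1 / math.sqrt(2)
    for i, amp in enumerate(state):
        if amp == 0:
            continue
        if i & bit:   # qubit is 1: H|1> = (|0> - |1>)/sqrt(2)
            new[i ^ bit] += s * amp
            new[i] -= s * amp
        else:         # qubit is 0: H|0> = (|0> + |1>)/sqrt(2)
            new[i] += s * amp
            new[i | bit] += s * amp
    return new

def apply_cnot(state, control, target, n):
    """Flip qubit `target` in every basis state where qubit `control` is 1."""
    cbit = 1 << (n - 1 - control)
    tbit = 1 << (n - 1 - target)
    new = [0j] * len(state)
    for i, amp in enumerate(state):
        j = i ^ tbit if i & cbit else i
        new[j] += amp
    return new

def probabilities(state):
    """Measurement probability of each basis state."""
    return [abs(a) ** 2 for a in state]

n = 2
state = [1 + 0j, 0j, 0j, 0j]          # start in |00>
state = apply_hadamard(state, 0, n)    # superposition: (|00> + |10>)/sqrt(2)
state = apply_cnot(state, 0, 1, n)     # entanglement: (|00> + |11>)/sqrt(2)
print([round(p, 3) for p in probabilities(state)])  # → [0.5, 0.0, 0.0, 0.5]
```

Measuring either qubit gives 0 or 1 with equal probability, but the two results always agree. Real SDKs such as IBM's Qiskit or Google's Cirq expose the same gates. This toy version just makes the underlying arithmetic visible.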

The year 2025, declared by the UN the International Year of Quantum Science and Technology, is a symbolic turning point. We are no longer in the realm of purely theoretical considerations. We have entered the NISQ (Noisy Intermediate-Scale Quantum) era, a time of imperfect, ‘noisy’ quantum computers that are nonetheless becoming more powerful every year.

Quantum computing is still a nascent industry, estimated to be worth US$866 million in 2023 and projected to reach US$4.4 billion by 2028.
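These two market figures imply a steep growth curve. As a quick check, this snippet computes the implied compound annual growth rate (CAGR) from the numbers quoted above. It is plain arithmetic on the article's own figures, not an independent forecast.

```python
# Market-size figures quoted in the text, in US$ billions.
value_2023 = 0.866
value_2028 = 4.4
years = 2028 - 2023

# CAGR: the constant yearly growth rate that turns the starting value
# into the ending value over `years` years.
cagr = (value_2028 / value_2023) ** (1 / years) - 1
print(f"Implied CAGR: {cagr:.1%}")  # → Implied CAGR: 38.4%
```

That works out to roughly 38% a year, or about a fivefold increase over five years.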

Great quantum families: contenders for the crown

Three powerful players have emerged on the battlefield for the quantum future: Google, IBM and Microsoft. Each is pursuing a different strategy to claim the technological throne.

Google: the alchemists of Mountain View

Google’s strategy focuses on spectacular, breakthrough demonstrations of power. Their latest weapon is the ‘Willow’ processor, but the real breakthrough lies not in the number of qubits, but in the mastery of error correction.

Google engineers have reported that Willow operates ‘below threshold’. A logical qubit is a set of physical qubits working together to correct errors. As Google enlarged its logical qubit from a 3×3 grid to a 5×5 grid and then a 7×7 grid of physical qubits, the logical error rate roughly halved at each step instead of growing.

This is a milestone the field has pursued since quantum error correction was first proposed in the mid-1990s. Google's claim to the throne rests on being the first to push the boundaries of science, as in 2019, when it was the first to announce the achievement of ‘quantum supremacy’.
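The idea behind a logical qubit can be illustrated with the simplest error-correcting code, a classical repetition code: store one bit as d copies and decode by majority vote. This is a deliberately simplified analogy. Google's Willow uses the far more complex surface code, which must also handle phase errors, and the function below is our own illustration. It computes the exact probability that majority voting fails when each copy flips independently with probability p. Below this toy code's threshold (p < 0.5), adding copies drives the logical error rate down. Above it, adding copies makes things worse.

```python
from math import comb

def logical_error_rate(p: float, d: int) -> float:
    """Probability that majority voting over d copies of a bit fails,
    i.e. that more than half of the d copies are flipped, each flip
    happening independently with probability p (toy repetition code)."""
    return sum(comb(d, k) * p**k * (1 - p) ** (d - k)
               for k in range(d // 2 + 1, d + 1))

for p in (0.01, 0.6):   # one error rate below threshold, one above
    rates = [logical_error_rate(p, d) for d in (1, 3, 5, 7)]
    print(f"p = {p}:", ["%.2e" % r for r in rates])
```

Errors that shrink rather than grow as the code gets larger are what ‘below threshold’ means. For the surface code used on real quantum hardware, the threshold is far lower, on the order of 1% per operation.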

IBM: kingdom builders for all

IBM is playing a very different game. Instead of isolated breakthroughs, they are betting on consistent progress and democratising access to technology. Their roadmap is public and precise, and they plan to make the ‘Nighthawk’ processor available in 2025.

A key element of their strategy is to integrate quantum computers with classical supercomputers (HPC), creating a hybrid future. The opening of IBM’s first quantum data centre in Europe, located in Germany, is a strategic move that brings quantum resources directly to European industry and academia.

IBM is not just building a lab experiment; it is creating a business-ready platform accessible through the cloud.

Microsoft: patient architects in Redmond

Microsoft took the path of highest risk, but also potentially highest reward. For decades they have invested in research into the elusive ‘topological qubit’, which would be inherently far more resistant to errors.

While waiting for this technology to mature, they built the powerful Azure Quantum ecosystem, designed to be independent of any particular hardware architecture. In collaboration with Quantinuum, they have demonstrated logical qubits with error rates up to 800 times lower than those of the underlying physical qubits, and have since entangled 12 logical qubits on the same hardware.

Their partnership with Atom Computing aims to build “the world’s most powerful quantum machine”, combining Microsoft’s error-correction software with promising technology based on neutral atoms.

The geopolitical great game: the dragon versus the eagle

The rivalry is moving into the global arena, where it is becoming central to the confrontation between the United States and China. It is a battle for technological hegemony involving billions of dollars of public and private funds.

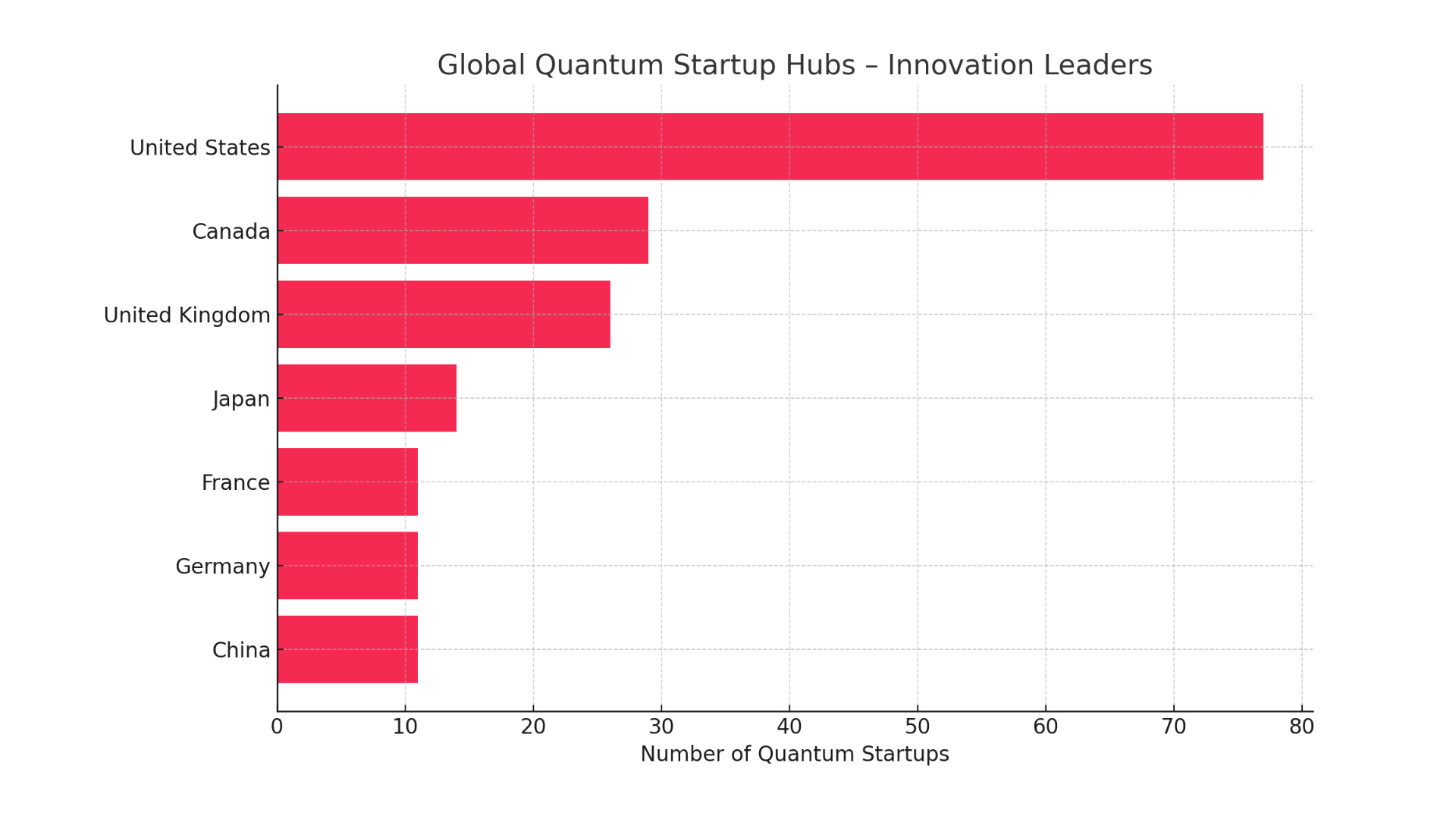

The US leads the way when it comes to the dynamism of the startup ecosystem, with 77 quantum technology companies operating there. This innovation is driven by massive private investment and the research power of big tech families.

The federal government also plays a key role by providing significant funding for basic research.

China, however, is playing a long-term, state-directed game, and it is catching up with shocking speed. According to the Australian Strategic Policy Institute (ASPI), China already leads in high-impact research output in 57 of 64 critical technologies, including vital areas such as quantum sensors.

The Middle Kingdom is pursuing a strategy based on massive investment in research infrastructure, and it aims to dominate the production of mature-node (older-generation) chips.

Europe, although a significant player, lags behind the two superpowers in terms of the scale of investment.

Nevertheless, initiatives such as EuroHPC, which is installing quantum computers at supercomputing centres in countries including Poland and Germany, show a coordinated effort to stay competitive.

Winners’ trophies: industries on the threshold of tomorrow

Why are governments and corporations investing billions in this technology? The answer lies in the revolutionary applications that await the winners.

Healthcare and pharmaceuticals: drug design

One of the most difficult problems for classical computers is the precise simulation of complex molecules. Because molecules are themselves quantum systems, quantum computers are naturally suited to simulating them. Some estimates suggest that using them could cut the time needed to discover and develop a new drug by 50-70%.
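Simple arithmetic shows why classical computers struggle here. A brute-force simulation must store 2ⁿ complex amplitudes for n qubits, and common molecular encodings use roughly one qubit per spin-orbital. The sketch below assumes 16 bytes per amplitude (double-precision complex numbers). Cleverer classical methods can do much better for some molecules, so treat this as a worst-case illustration rather than a precise bound.

```python
def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory needed to store every amplitude of an n-qubit state,
    assuming one double-precision complex number (16 bytes) each."""
    return (2 ** n_qubits) * bytes_per_amplitude

for n in (10, 30, 50):
    print(f"{n} qubits: {statevector_bytes(n):.2e} bytes")
```

At 50 qubits the state already needs about 1.8 × 10¹⁶ bytes (16 pebibytes), and every additional qubit doubles that figure.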

Pharmaceutical giants such as Roche and Pfizer are actively working with technology companies to prepare for the coming of the quantum era.

Pfizer’s collaboration with technology company XtalPi, using artificial intelligence as a bridge to full quantum computing, has already reduced the time it takes to calculate the crystal structure of molecules from months to just days.

Finance: quantum hedge fund

Financial markets are a world of complex optimisation and risk-modelling problems. In principle, quantum algorithms could handle far more variables and scenarios than classical methods, leading to better-optimised portfolios and more accurate risk assessment.
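One concrete, well-studied source of this promise is quantum amplitude estimation, which can speed up the Monte Carlo simulations used in risk modelling. The sketch below is an order-of-magnitude comparison only. It ignores constant factors, assumes fault-tolerant hardware, and does not describe what the pilot projects mentioned in this article actually run. With classical Monte Carlo, the estimation error shrinks as 1/√N for N samples. With amplitude estimation, the error shrinks as 1/N for N quantum queries, a quadratic speedup. The function names are hypothetical.

```python
def classical_mc_samples(epsilon: float) -> float:
    """Classical Monte Carlo: error ~ 1/sqrt(N), so N ~ 1/epsilon**2."""
    return 1 / epsilon**2

def amplitude_estimation_queries(epsilon: float) -> float:
    """Quantum amplitude estimation: error ~ 1/N, so N ~ 1/epsilon."""
    return 1 / epsilon

for eps in (1e-2, 1e-3, 1e-4):
    c = classical_mc_samples(eps)
    q = amplitude_estimation_queries(eps)
    print(f"target error {eps:g}: ~{c:,.0f} classical samples "
          f"vs ~{q:,.0f} quantum queries")
```

At a target error of 10⁻⁴, that is roughly 10⁸ classical samples versus 10⁴ quantum queries. On real hardware, error-correction overhead can narrow this gap considerably.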

Financial institutions such as JPMorgan and BBVA are already running pilot projects in collaboration with IBM and D-Wave. However, the same power also poses an existential threat. A quantum computer of sufficient scale running Shor's algorithm would be able to break the public-key encryption schemes, such as RSA and elliptic-curve cryptography, that underpin the security of the entire digital economy.

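This creates an urgent need to implement so-called post-quantum cryptography: classical algorithms designed to resist quantum attacks, the first of which NIST standardised in 2024.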
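The threat comes from Shor's algorithm. It reduces factoring a number N, the hard problem behind RSA, to finding the order r of a modulo N: the smallest r with aʳ mod N = 1. The illustrative toy below is plain Python with hypothetical function names. It performs the order-finding step by brute force, which takes exponential time on a classical machine. A large fault-tolerant quantum computer would replace exactly that step with an efficient quantum subroutine, while the surrounding gcd arithmetic stays classical.

```python
from math import gcd

def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, by brute force (assumes gcd(a, n) == 1).
    This is the step Shor's algorithm performs efficiently on a quantum computer."""
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r

def factor_via_order(n: int, a: int):
    """Classical pre- and post-processing of Shor's algorithm for a chosen base a.
    Returns a pair of factors, or None if this base is unlucky."""
    g = gcd(a, n)
    if g != 1:
        return g, n // g              # a already shares a factor with n
    r = find_order(a, n)
    x = pow(a, r // 2, n)             # a**(r/2) mod n
    if r % 2 == 1 or x == n - 1:
        return None                   # retry with a different base a
    return gcd(x - 1, n), gcd(x + 1, n)

print(factor_via_order(15, 7))  # → (3, 5)
```

For N = 15 and a = 7 the order is 4, and gcd(7² − 1, 15) = 3 and gcd(7² + 1, 15) = 5 recover the factors. A 2048-bit RSA modulus has over 600 decimal digits, which is why brute-force order finding is hopeless on classical hardware.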

Materials science and chemistry: engineering the impossible

The creation of new materials today relies heavily on trial and error. Quantum computers are opening the way to ‘custom material design’, enabling the precise simulation of the quantum properties of substances before they are even produced in the laboratory. This could lead to breakthroughs such as superconductors that work at room temperature or catalysts that make industrial processes radically more energy efficient. Companies such as BASF are deeply involved in research, forming partnerships with startups and academic institutions.

From supremacy to advantage: the true measure of victory

Google’s announcement of ‘quantum supremacy’ in 2019 was a milestone, but not a commercial turning point. The problem their computer solved had no practical application. It is therefore crucial to distinguish the terms:

- Quantum Supremacy: Proof that a quantum computer can beat a classical one at some task, even a useless one. It is a scientific benchmark, but without direct commercial relevance.

- Quantum Advantage: The real goal. It means the ability to solve a useful, real-world business problem faster, cheaper or more accurately than any classical computer.

- Quantum Utility: The pragmatic state we are currently in. It means using today’s imperfect NISQ computers to achieve tangible, though not yet revolutionary, results.

The change in language itself, away from the confrontational term ‘supremacy’ and towards the more practical terms ‘advantage’ and ‘utility’, is a sign that the industry as a whole is maturing. It marks a shift from pure science to commercial applications.

The quantum revolution will not come with a bang. It will be a quiet, creeping transformation. The true measure of victory in this game of thrones will not be supremacy, but utility – the number of problems solved and the value created. The time to prepare is not when the throne is won, but now, when the great families are making their first strategic moves. The game has begun.