For every IT director and owner of a small or medium-sized business in Poland, planning a budget for technology equipment means fighting on two fronts. With one eye, they monitor technological advances and the needs of the company; with the other, and with growing anxiety, they follow the exchange rate charts. This is no coincidence. Fluctuations in the forex markets, especially the US dollar (USD/PLN) exchange rate, have a direct and often brutal impact on the final prices of servers, laptops and components.

When the zloty hit a record low in autumn 2022 and the dollar reached 5 zlotys, Polish consumers and companies were in for a shock. Apple’s new product launches came with price increases of up to 30%. This extreme example exposed a fundamental truth about the Polish IT market: Poland is a technology importer, and the global supply chain is priced in hard currency.

However, reducing this relationship solely to a simple USD/PLN conversion rate is a mistake that can cost companies tens of thousands of zlotys. Analysis of the market in recent years shows that the invoice price is the product of at least four forces: the dollar exchange rate, the stabilising role of the euro, the global supply of semiconductors and price wars between technology giants.

For Polish SMEs, understanding these complex mechanics and proactively managing risk is no longer optional; it is becoming a strategic necessity.

Anatomy of a price: why the server speaks dollar and the laptop speaks euro

To manage costs effectively, it is important to understand why different categories of equipment react differently to exchange rate fluctuations.

Most of the global technology trade, from silicon wafers in Taiwan to finished microprocessors from Intel or AMD, is settled in US dollars (USD). A Polish distributor or integrator, when buying components or servers, pays for them in USD. This means that any increase in the USD/PLN exchange rate almost immediately raises the cost of the purchase. Distributors, wishing to protect their margins, must pass this cost on to the end customer.
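A minimal sketch of this pass-through, assuming the distributor simply converts its USD cost at the current USD/PLN rate and adds a fixed percentage margin. The server cost, the 8% margin and the function name are hypothetical, not market data:

```python
def pln_price(usd_cost: float, usd_pln: float, margin: float) -> float:
    """Net PLN price of a USD-priced item, assuming a fixed percentage margin."""
    return usd_cost * usd_pln * (1 + margin)

# Hypothetical server costing USD 10,000, assumed 8% distributor margin
before = pln_price(10_000, 4.00, 0.08)
after = pln_price(10_000, 4.40, 0.08)
print(f"{before:,.0f} PLN -> {after:,.0f} PLN ({after / before - 1:.1%})")
```

With a fixed percentage margin, a 10% rise in USD/PLN (4.00 to 4.40) becomes a 10% rise in the PLN price; real pass-through is rarely this clean, as the price lag described in this section shows.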

The server market is the most sensitive here. Custom configurations (CTO, configure-to-order), ordered from manufacturers such as Dell or HPE, are often priced directly in USD, leaving the Polish company bearing almost 100 per cent of the exchange rate risk.

The situation is different in the laptop segment. A significant proportion of laptops reach Poland via European distribution centres in the euro zone (e.g. in Germany or the Netherlands), and the Polish distributor settles accounts with its European supplier in euro (EUR). In this model, the EUR/PLN exchange rate acts as a “filter” or “shock absorber” for sudden jumps in the dollar. Laptop prices are therefore more stable, but it should be remembered that the euro price already reflects the USD/EUR exchange rate set by the European headquarters.
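The “shock absorber” effect can be sketched with a simplified model. It assumes the European headquarters converts its USD cost into a EUR list price once per pricing period and the Polish distributor then pays that EUR price at the current EUR/PLN rate. The 10% and 2% rate moves, the 1.08 USD-per-EUR rate and the function names are all hypothetical:

```python
def server_cost_pln(usd_price: float, usd_pln: float) -> float:
    # CTO server: priced in USD and converted at today's USD/PLN rate
    return usd_price * usd_pln

def laptop_cost_pln(usd_cost: float, eur_usd_at_pricing: float, eur_pln: float) -> float:
    # Laptop: HQ converted its USD cost into a EUR list price when the price list was set
    # (eur_usd_at_pricing = USD per 1 EUR); the distributor pays at today's EUR/PLN
    eur_list_price = usd_cost / eur_usd_at_pricing
    return eur_list_price * eur_pln

# Hypothetical period: USD/PLN jumps 10%, EUR/PLN rises only 2%, EUR list price unchanged
server_change = server_cost_pln(1_000, 4.40) / server_cost_pln(1_000, 4.00) - 1
laptop_change = (laptop_cost_pln(1_000, 1.08, 4.32 * 1.02)
                 / laptop_cost_pln(1_000, 1.08, 4.32) - 1)
print(f"server: {server_change:.1%}, laptop: {laptop_change:.1%}")
```

In this sketch the laptop cost follows only EUR/PLN until headquarters reprices; at the next price list, `eur_usd_at_pricing` is updated and the dollar move feeds through.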

There is also the phenomenon of ‘price lag’. Distributors still hold stock bought at the old, lower exchange rate, so exchange rate changes do not always pass through to prices 1:1. This was clearly demonstrated in early 2021: between December 2020 and March 2021, the USD/PLN exchange rate rose by more than 9%, but average smartphone and tablet prices rose by “only” 4%. The market temporarily absorbed part of the hit, giving companies a brief ‘window’ to buy before the new, more expensive stock arrived.
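The 2021 figures translate into a rough pass-through ratio. The sketch below uses the rounded numbers from the text (9% for the exchange rate, 4% for prices) and adds a hypothetical `blended_rate` helper, assuming the distributor prices off the average rate at which its current stock was bought:

```python
def pass_through(price_change: float, fx_change: float) -> float:
    """Share of an exchange rate move that showed up in end prices."""
    return price_change / fx_change

def blended_rate(old_rate: float, new_rate: float, share_old_stock: float) -> float:
    # Average USD/PLN rate embedded in inventory when part of it was bought earlier
    return share_old_stock * old_rate + (1 - share_old_stock) * new_rate

# Dec 2020 - Mar 2021: USD/PLN up "more than 9%", device prices up about 4%
ratio = pass_through(0.04, 0.09)
print(f"pass-through: {ratio:.0%}")

# Hypothetical: half the stock bought at 4.00, half at 4.40
print(f"rate embedded in stock: {blended_rate(4.00, 4.40, 0.5):.2f}")
```

Because the exchange rate rose by more than 9%, the resulting 44% is an upper bound on the share of the move passed through to prices in that period.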

Server market trap 2024/2025: a missed SME opportunity

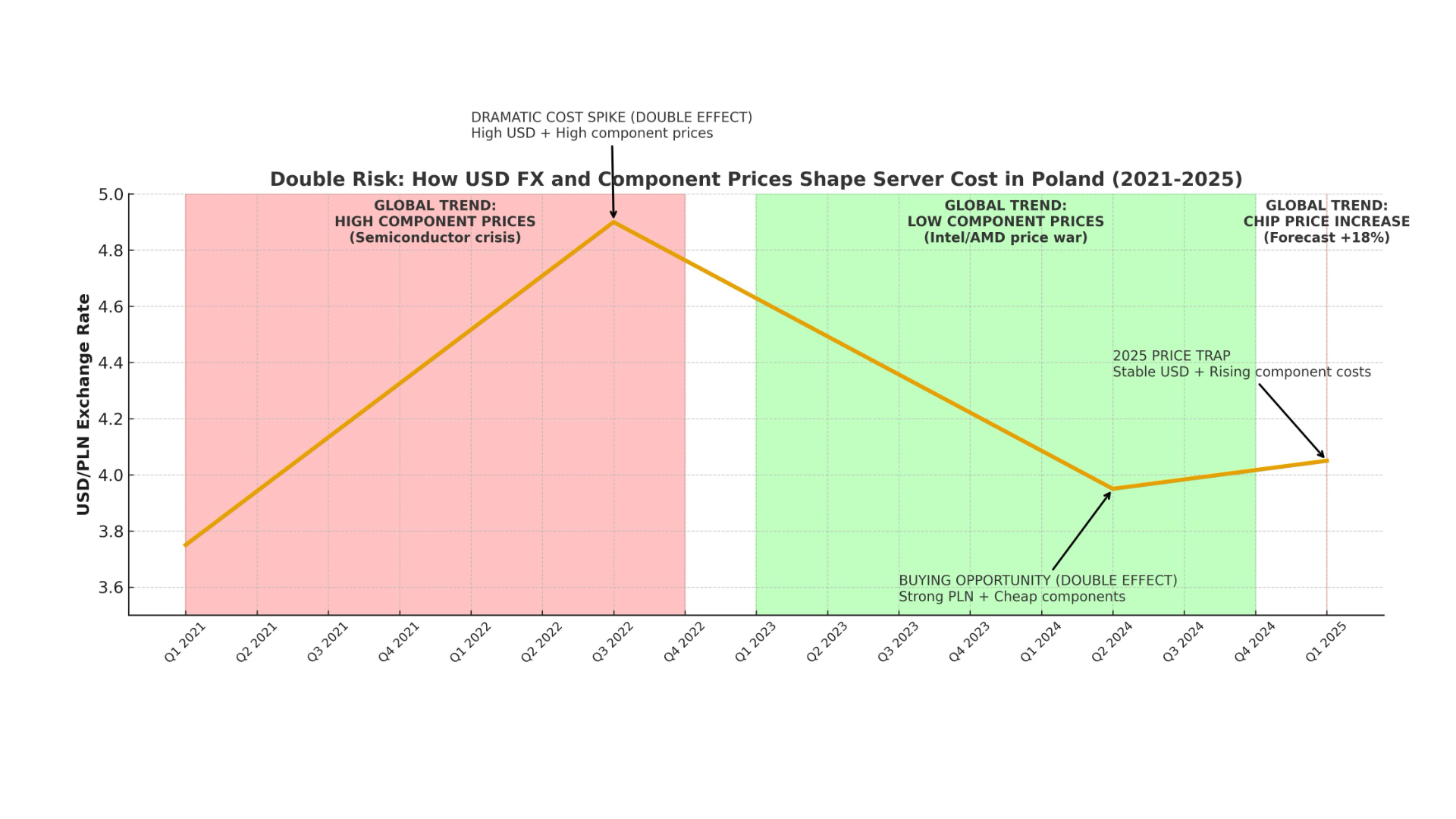

Analysis of the server market reveals a key and risky paradox into which many Polish companies have fallen: 2024 was, in theory, the best time in years to upgrade infrastructure. Two key factors contributed to this:

- Strong zloty: In 2024 the dollar weakened against the zloty, significantly reducing the cost of importing equipment priced in USD.

- Global price war: At the same time, Intel and AMD fought a fierce battle for market share. This led to deep price cuts on key server processors (Xeon and EPYC), with discounts reaching 35-50% below list prices on the US market.

A strong currency and cheap core components made a textbook ‘buying window’. Despite this, market data show that the Polish IT equipment market shrank in 2024 (its value fell from USD 10.03 billion to USD 9.39 billion). Companies, probably because of the general macroeconomic situation and high interest rates, put investments on hold.

These companies now risk falling into a trap. Those that sat out 2024 in the hope of further declines face a much worse situation in 2025. Forecasts for early 2025 point to an 18 per cent increase in average chip prices and lead times lengthening again to more than four months. Trying to ‘wait it out’ is likely to prove a strategic mistake: these companies will be forced to buy equipment at higher prices and with longer lead times.

Noise in the data: when the exchange rate takes a back seat

Analysing IT prices solely through the lens of currencies gives an incomplete picture. Other factors periodically become more important.

The first is the availability of semiconductors. The 2021-2022 crisis showed that price can become secondary to the ability to buy at all. What is more, the crisis created a large hidden currency risk. If the average waiting time for a server is more than four months, a Polish company placing an order in January (at an exchange rate of PLN 4.00) with payment due in May may have to pay 10% more if the exchange rate rises to PLN 4.40 in the meantime.
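The arithmetic behind this example, as a minimal sketch: the two rates come from the text, while the USD 20,000 order value and the function name are hypothetical:

```python
def payment_surprise(usd_amount: float, rate_at_order: float, rate_at_payment: float):
    """PLN overrun when an order is budgeted at one rate and paid at another."""
    budgeted = usd_amount * rate_at_order
    paid = usd_amount * rate_at_payment
    return paid - budgeted, paid / budgeted - 1

# Hypothetical USD 20,000 server order: budgeted in January, paid in May
extra_pln, extra_pct = payment_surprise(20_000, 4.00, 4.40)
print(f"extra cost: {extra_pln:,.0f} PLN ({extra_pct:.0%})")
```

The longer the gap between order and payment, the wider the range of rates the company may face, which is why long lead times turn a supply problem into a currency problem.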

The second factor is geopolitics. Tariff decisions, such as those imposed by the US on Chinese imports, force manufacturers (Dell, HP, Lenovo) into costly relocations of production, for example to Vietnam. The costs of this global reorganisation of the supply chain are built into the base price of the product, raising it for everyone, regardless of local exchange rates.

How can SMEs protect themselves?

For Polish companies, passivity towards currency risk is a gamble. Instead of trying to predict the perfect low point (which, as 2024 showed, is almost impossible), companies need to implement deliberate risk management strategies.

1. Purchase planning based on cycles, not ‘timing’: Instead of guessing, IT and finance departments should monitor two key indicators: the local USD/PLN exchange rate and global component price trends (e.g. CPU price wars). The budget should be flexible enough to bring key purchases forward when both indicators are favourable.
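One way to make “both indicators are favourable” concrete is a simple rule over monthly data. The sketch assumes a hypothetical rule (each indicator below its own trailing average), a six-observation window and a component price index the company would have to source itself; it is an illustration, not a forecasting method:

```python
from statistics import mean

def buy_signal(usd_pln: list[float], component_index: list[float], window: int = 6) -> bool:
    """True when the latest USD/PLN rate and the latest component price index are
    both below the average of their last `window` observations (hypothetical rule)."""
    fx_favourable = usd_pln[-1] < mean(usd_pln[-window:])
    components_favourable = component_index[-1] < mean(component_index[-window:])
    return fx_favourable and components_favourable

# Hypothetical monthly series: zloty strengthening, CPU prices falling
print(buy_signal([4.30, 4.20, 4.10, 4.00, 3.95, 3.90], [100, 98, 95, 90, 85, 80]))
```

Requiring both conditions mirrors the strategy described: a cheap dollar alone, or cheap components alone, does not trigger an accelerated purchase.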

2. Active management of currency risk (hedging): Hedging instruments, previously seen as the domain of large corporations, are now also available to SMEs.

- Forward contracts: This is the simplest tool. If a company knows that it needs to buy $50,000 worth of equipment in three months’ time, it can lock in a rate today in a forward contract with its bank (the forward rate is based on today’s spot rate, adjusted for the PLN/USD interest rate differential). This eliminates the risk of a rising rate, but also removes the benefit if the rate falls.

- Currency options: These act as an ‘insurance policy’. The company pays a premium for the right (but not the obligation) to buy the currency at a fixed rate. If the market rate turns out better, it buys at the market rate; if worse, it exercises the option and is protected against the loss.

- Natural hedging: A straightforward method for companies that have revenues in USD or EUR (e.g. from exporting IT services). It involves paying for imported equipment in the currency the company has earned, avoiding conversion costs and exchange rate risk on the matched amount.
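The three tools can be compared on the $50,000 example from the forward-contract bullet. A minimal sketch, assuming a forward rate of 4.00, an option strike of 4.00 with a premium of PLN 0.08 per dollar, and USD 20,000 of the company’s own export revenue; all of these figures and function names are hypothetical, and real forward rates and premiums are quoted by the bank:

```python
USD_NEEDED = 50_000  # equipment bill due in three months (example from the text)

def unhedged(spot: float) -> float:
    # Buy all dollars at whatever the spot rate is on the payment date
    return USD_NEEDED * spot

def forward(spot: float, forward_rate: float = 4.00) -> float:
    # Rate fixed with the bank today; the spot rate on the payment date is irrelevant
    return USD_NEEDED * forward_rate

def call_option(spot: float, strike: float = 4.00, premium: float = 0.08) -> float:
    # Exercise only if spot is above the strike; the premium (PLN per USD) is paid either way
    return USD_NEEDED * (min(spot, strike) + premium)

def open_usd_position(usd_payables: float, usd_receivables: float) -> float:
    # Natural hedge: only dollars not matched by USD income remain exposed
    # (positive = dollars still to buy, negative = surplus dollars to sell)
    return usd_payables - usd_receivables

for spot in (3.80, 4.40):  # hypothetical rates on the payment date
    print(f"spot {spot:.2f}: unhedged {unhedged(spot):,.0f}, "
          f"forward {forward(spot):,.0f}, option {call_option(spot):,.0f} PLN")
print("open USD position:", open_usd_position(USD_NEEDED, 20_000))
```

At 4.40 the forward (PLN 200,000) and the option (PLN 204,000) both beat the unhedged purchase (PLN 220,000). At 3.80 the unhedged purchase is cheapest (PLN 190,000), the option still captures the lower rate at the cost of its premium (PLN 194,000), and the forward stays at PLN 200,000. With natural hedging, only USD 30,000 remains exposed to USD/PLN.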

3. Building supply chain resilience: The risks for 2025 (more expensive chips, longer deliveries) show that SMEs need to think not only about their own risks but also about those of their suppliers. It is worth actively talking to local IT integrators. The key question is: does the supplier have diversified sources?

The best strategy for SMEs may be to sign a framework agreement with a supplier for the cyclical delivery of equipment (e.g. 50 laptops per quarter) at a fixed PLN price for 12 months. In this way, the supplier, which is much better equipped for professional hedging, assumes both the currency risk (USD/PLN) and the component price risk (the projected 18% increase). Such an agreement provides invaluable predictability of operating costs.
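The risk transfer in such an agreement can be sketched with the 50-laptops-per-quarter example. The contract price, the USD unit cost and the stress scenario are hypothetical, and applying the 18% component increase to the whole unit cost for the full year is a deliberately pessimistic simplification:

```python
QUARTERS = 4
UNITS_PER_QUARTER = 50       # from the example in the text
FIXED_PRICE_PLN = 5_000.0    # hypothetical contract price per laptop

def customer_cost() -> float:
    # The buyer's annual bill depends only on the contract, not on markets
    return QUARTERS * UNITS_PER_QUARTER * FIXED_PRICE_PLN

def supplier_margin(usd_cost: float, usd_pln: float, component_rise: float) -> float:
    # The supplier buys in USD at the prevailing rate and absorbs any cost increase
    units = QUARTERS * UNITS_PER_QUARTER
    unit_cost_pln = usd_cost * (1 + component_rise) * usd_pln
    return units * (FIXED_PRICE_PLN - unit_cost_pln)

print(f"customer pays: {customer_cost():,.0f} PLN")
print(f"supplier margin, baseline: {supplier_margin(1_100, 4.00, 0.00):,.0f} PLN")
print(f"supplier margin, stress:   {supplier_margin(1_100, 4.40, 0.18):,.0f} PLN")
```

The customer’s bill stays at PLN 1,000,000 in both scenarios, while the supplier’s margin swings from about PLN 120,000 to a loss of about PLN 142,000; this is the risk the supplier has to hedge or price into the contract.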

In a volatile economic environment, managing IT currency risk is no longer solely the responsibility of the finance department. It is becoming a key element of a company’s technology strategy.