We live in an age of fundamental paradox. On the one hand, artificial intelligence, driven by large language models (LLMs), is becoming the lifeblood of modern business, promising unprecedented innovation.

On the other hand, its insatiable appetite for data clashes head-on with the global push for privacy. This conflict is no longer just a matter of ethics, but a hard regulatory reality that is creating and transforming entire technology markets before our eyes.

Public sentiment has reached critical mass. Research shows that as much as 86% of the US population expresses growing concern about how their data is being processed, and more than half believe AI will make it harder to protect personal information.

In response, governments around the world are building a legislative wall. What started with the groundbreaking GDPR in Europe has quickly spread globally, creating a dense web of legislation, from the CCPA in California to the LGPD in Brazil.

Today, more than 137 countries already have national data protection laws, covering almost 80% of the world’s population.

The stakes in this game are astronomical. Regulators do not hesitate to use their most powerful weapon: financial penalties. The record €1.2 billion fine imposed on Meta for data transfers between the EU and the US and the €746 million fine imposed on Amazon are powerful signals to the market.

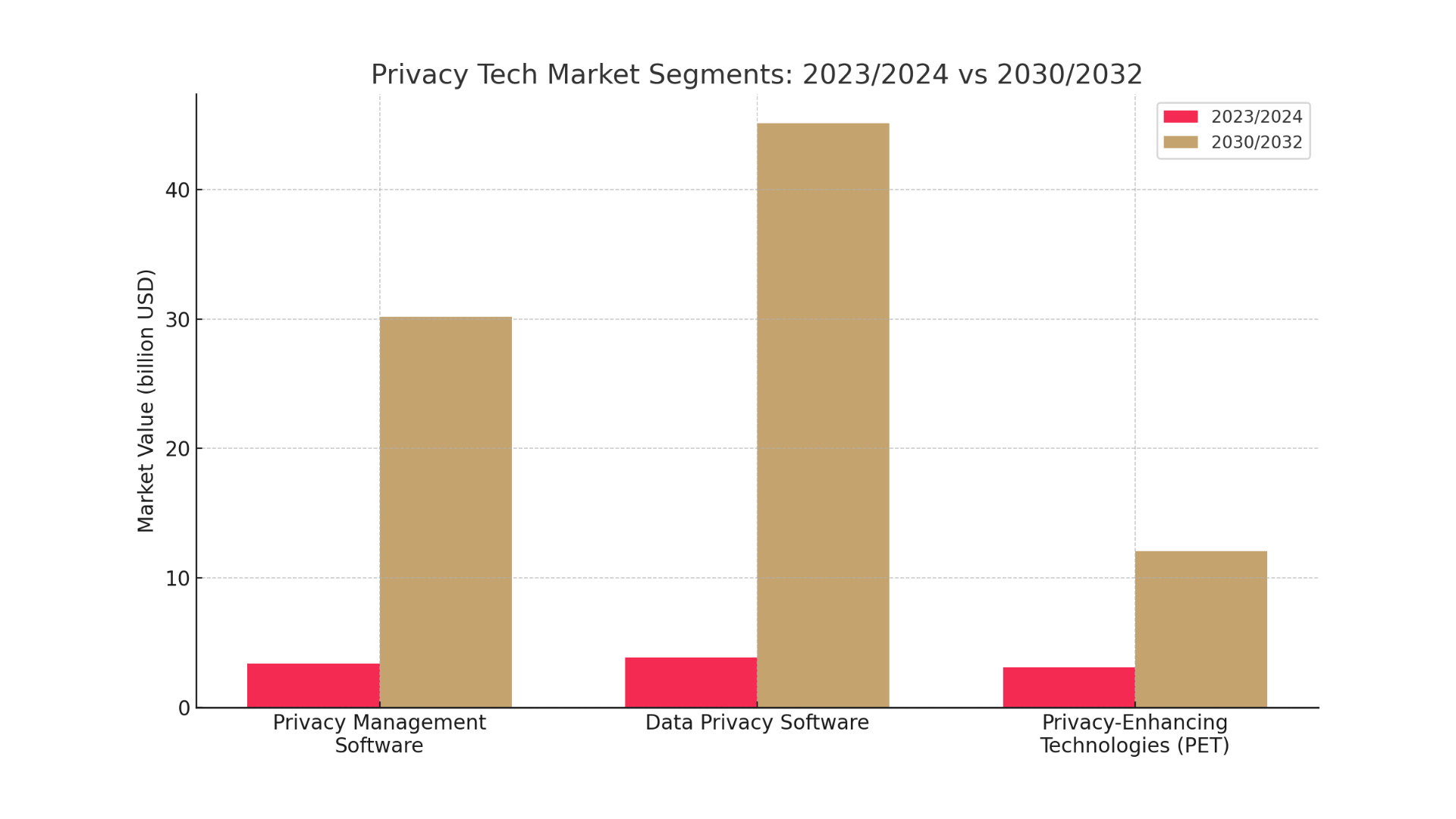

Any such decision is a direct growth stimulus for the ‘Privacy Tech’ sector – a market that has not grown organically out of consumer needs, but has been almost entirely created by legislative action.

The law does not just regulate technology – it creates it. In this new landscape, a key conclusion emerges: the tool that created the problem – artificial intelligence – is simultaneously becoming the key to solving it.

We are entering the era of ‘Privacy 2.0’, in which compliance becomes intelligent, proactive and, ultimately, autonomous.

From manual work to intelligent automation

Before the GDPR era, privacy management in many organisations was based on manual data mapping, endless spreadsheets and tedious processes for responding to data subject access requests (DSARs).

The cost of this inefficiency was huge: manually handling a single DSAR was estimated to cost more than $1,500 on average. In a world where companies process petabytes of data, such a model was untenable.

Artificial intelligence (AI) has become the engine that is driving a revolution in this area, transforming privacy management platforms into intelligent command centres. Modern systems are using AI to automate key, once manual processes.

AI algorithms scan a company’s entire infrastructure, from local servers to the cloud, for personal data, interpreting its context and building a dynamic map in real time. AI models then analyse data flows and access permissions to proactively identify and assess risks, flagging potential violations of privacy-by-design principles.
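To make the idea of a data map concrete, here is a minimal sketch of the discovery step. It is an illustration under strong assumptions: the source names, the `PATTERNS` table and the `build_data_map` helper are hypothetical, and simple regular expressions stand in for the context-aware models that commercial platforms use.

```python
import re
from collections import defaultdict

# Hypothetical, deliberately simple patterns. Real scanners combine many more
# detectors with contextual models; these two are for illustration only.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "phone": re.compile(r"\+?\d[\d\s-]{7,}\d"),
}

def build_data_map(sources):
    """Return {source_name: {category: match_count}} for sources that contain hits."""
    data_map = defaultdict(dict)
    for name, text in sources.items():
        for category, pattern in PATTERNS.items():
            hits = pattern.findall(text)
            if hits:
                data_map[name][category] = len(hits)
    return dict(data_map)

# Assumed sample inputs standing in for files, tables or log streams.
sources = {
    "crm_export.csv": "Anna,anna@example.com,+48 600 100 200",
    "app.log": "request served in 12 ms",
}
data_map = build_data_map(sources)
```

In this sketch only `crm_export.csv` ends up in the map, with one email and one phone number recorded. Re-running the scan whenever a source changes is what would keep such a map "dynamic".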

AI also automates the entire user consent lifecycle and DSAR fulfilment, cutting processing times from weeks to hours.

The financial impact of this transformation is measurable. Organisations that make extensive use of AI and automation in the security space save an average of $1.76 million in data breach costs compared to companies that do not.

This is hard evidence of the return on investment of smart privacy management platforms that turn the cost of compliance into operating profit.

The trust frontier: The world of privacy-enhancing technologies (PETs)

Automation, however, is only the beginning. The real revolution is taking place at the border of cryptography and advanced mathematics, in the world of Privacy-Enhancing Technologies (PETs).

It is a set of tools aiming to achieve the ‘holy grail’ of analytics: the ability to extract valuable information from sensitive sets without revealing the data itself.

One of the key technologies is homomorphic encryption (HE). It allows calculations to be performed on encrypted data, as if the analyst were performing operations on a closed box without seeing its contents.

Only the owner of the data, who holds the key, can open the box and see the result. The technology, which is being developed by giants such as Microsoft and IBM, is being used in medicine to analyse patient data pooled from multiple hospitals and in finance to let institutions detect fraud collaboratively.
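The ‘closed box’ can be illustrated with a toy version of the Paillier cryptosystem, a well-known additively homomorphic scheme. This is a sketch under stated assumptions, not what any vendor ships: the primes are far too small to be secure, the function names are hypothetical, and production libraries typically rely on different, lattice-based schemes.

```python
import math
import secrets

def keygen(p=1009, q=1013):
    """Toy Paillier keys from two small primes (insecure sizes, illustration only)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p - 1, q - 1)
    mu = pow(lam, -1, n)  # this shortcut is valid because the generator is n + 1
    return n, (lam, mu)

def encrypt(n, m):
    """Encrypt integer m (0 <= m < n) under public key n."""
    n2 = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, m, n2) * pow(r, n, n2)) % n2

def add_encrypted(n, c1, c2):
    """Multiplying ciphertexts adds the hidden plaintexts (mod n)."""
    return (c1 * c2) % (n * n)

def decrypt(n, priv, c):
    lam, mu = priv
    x = pow(c, lam, n * n)
    return ((x - 1) // n * mu) % n

# Two hospitals encrypt their patient counts; an analyst adds them blindly.
n, priv = keygen()
c_a = encrypt(n, 120)
c_b = encrypt(n, 75)
c_sum = add_encrypted(n, c_a, c_b)
total = decrypt(n, priv, c_sum)  # only the key holder can read the sum
```

The analyst who calls `add_encrypted` never sees 120 or 75, yet the key holder decrypts the combined ciphertext to 195.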

Another groundbreaking tool is the zero-knowledge proof (ZKP). This is a cryptographic protocol that allows you to prove that you know a certain piece of information without revealing the information itself.

It’s like being able to prove you are over 21 without showing an ID card with your date of birth and address. ZKP is revolutionising decentralised identity and private financial transactions.
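A classic, compact example of the idea is the Schnorr identification protocol, in which a prover shows knowledge of a secret exponent without disclosing it. The sketch below makes several assumptions: the group parameters are toy-sized, the function names are hypothetical, and it demonstrates knowledge of a secret rather than the age check described above, which would require a more involved range proof.

```python
import secrets

# Toy group: P = 2 * Q + 1 with P and Q prime, and G generating the subgroup
# of order Q. These sizes are far too small for real use.
P, Q, G = 2039, 1019, 4

def keygen():
    """Secret x and public key y = G^x mod P."""
    x = secrets.randbelow(Q - 1) + 1
    return x, pow(G, x, P)

def commit():
    """Prover picks a one-time nonce k and sends t = G^k mod P."""
    k = secrets.randbelow(Q - 1) + 1
    return k, pow(G, k, P)

def respond(x, k, c):
    """Prover answers challenge c; the random k masks the secret x."""
    return (k + c * x) % Q

def verify(y, t, c, s):
    """Verifier checks G^s == t * y^c (mod P) using public values only."""
    return pow(G, s, P) == (t * pow(y, c, P)) % P

x, y = keygen()            # prover's secret and public key
k, t = commit()            # step 1: commitment
c = secrets.randbelow(Q)   # step 2: verifier's random challenge
s = respond(x, k, c)       # step 3: response
accepted = verify(y, t, c, s)
```

The verifier sees only `y`, `t`, `c` and `s`. Because `k` is fresh and random for every run, `s` on its own reveals nothing about `x`.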

The problem of analysing data held in distributed, private sets is addressed by differential privacy and federated learning. Differential privacy involves adding precisely calibrated ‘noise’ to a dataset, or to the results of queries run against it, so that no single individual can be identified, while overall statistical trends are preserved.
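One standard way to calibrate that noise is the Laplace mechanism, sketched here for a counting query. The dataset, the `private_count` helper and the chosen `epsilon` are illustrative assumptions. A count changes by at most 1 when one person is added or removed, so the noise scale is `1 / epsilon`.

```python
import random

def laplace_noise(scale, rng=random):
    """Laplace(0, scale) sample, drawn as the difference of two exponentials."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)

def private_count(values, predicate, epsilon, rng=random):
    """Noisy count of matching values; sensitivity of a count is 1."""
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)

# Assumed sample data: ages of eight people.
ages = [23, 35, 41, 29, 62, 57, 38, 44]
noisy = private_count(ages, lambda a: a >= 40, epsilon=0.5)
```

A smaller `epsilon` means more noise and stronger privacy. Averaged over many people or many releases the trend survives, while any single answer says little about one individual.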

In contrast, federated learning is an approach in which AI models are trained directly on end devices (e.g. smartphones) and only aggregated, anonymised model updates are sent to a central server, rather than raw user data.
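The training loop can be sketched as a bare-bones version of federated averaging. Everything here is a simplifying assumption: the model is a single weight fitting y = w * x, the two ‘devices’ are plain lists, and the helper names are hypothetical. Real deployments add secure aggregation and often differential privacy on top.

```python
def local_update(w, data, lr=0.01, epochs=20):
    """Train on one device's private data and return only the weight delta."""
    w_local = w
    for _ in range(epochs):
        # Gradient of mean squared error for the model y = w * x.
        grad = sum(2 * (w_local * x - y) * x for x, y in data) / len(data)
        w_local -= lr * grad
    return w_local - w, len(data)

def federated_round(w, clients):
    """Server averages the deltas, weighted by each client's sample count."""
    updates = [local_update(w, data) for data in clients]
    total = sum(n for _, n in updates)
    return w + sum(delta * n for delta, n in updates) / total

# Each inner list stays on its own device; the server never reads it.
clients = [
    [(1.0, 3.0), (2.0, 6.0)],
    [(3.0, 9.0), (4.0, 12.0), (5.0, 15.0)],
]
w = 0.0
for _ in range(30):
    w = federated_round(w, clients)
```

Only the pair returned by `local_update` crosses the network. In this toy setup the shared weight converges towards 3, the slope underlying both devices' data.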

Giants such as Apple and Google are already using these techniques.

The deployment of these technologies signals a fundamental shift. Data is no longer an asset whose value lies in exclusive ownership. It is becoming a resource that can be securely shared and collaborated on, unlocking the enormous economic value that was previously trapped in corporate silos. Privacy becomes not a barrier, but a technology that enables innovation.

The endgame: the dawn of autonomous privacy

The evolution so far sets out a clear trajectory, the logical culmination of which is a vision of the future in which data protection is managed by autonomous AI systems. A distinction must be made here between automation and autonomy.

Automation performs defined tasks. Autonomy is the ability of a system to learn, adapt and make decisions on its own to achieve a goal.

Such a system of the future will be based on the convergence of several technologies. The foundation is autonomous databases that use AI to become self-governing, self-securing and self-repairing.

This is the basis for a new generation of agent-based AI – systems that can autonomously interact with databases and perform complex tasks to achieve a goal, such as ‘ensure continuous compliance with global regulations’.

The nervous system is an intelligent data pipeline that filters and redacts personal data in real time before it reaches analytics.
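A minimal sketch of such a pipeline stage follows, written as a streaming generator. The field policy (`DROP_FIELDS`, `PSEUDONYMISE_FIELDS`), the event shape and the key are hypothetical. A real system would load the policy from governance tooling and keep the key in a secrets manager.

```python
import hashlib
import hmac

DROP_FIELDS = {"name", "email"}      # hypothetical policy: remove outright
PSEUDONYMISE_FIELDS = {"user_id"}    # hypothetical policy: replace with keyed hash

def pseudonymise(value, key):
    """Stable pseudonym: same input and key give the same token."""
    return hmac.new(key, str(value).encode(), hashlib.sha256).hexdigest()[:16]

def privacy_filter(events, key):
    """Yield cleaned events one at a time, so the stage can sit in a live stream."""
    for event in events:
        clean = {}
        for field, value in event.items():
            if field in DROP_FIELDS:
                continue
            if field in PSEUDONYMISE_FIELDS:
                value = pseudonymise(value, key)
            clean[field] = value
        yield clean

# Assumed sample events and an illustrative key.
events = [
    {"user_id": 42, "name": "Anna", "email": "anna@example.com", "amount": 19.99},
    {"user_id": 42, "name": "Anna", "email": "anna@example.com", "amount": 5.00},
]
cleaned = list(privacy_filter(events, key=b"demo-key-not-for-production"))
```

Analysts can still group the cleaned events by the pseudonymous `user_id`, but names and email addresses never reach the analytics layer.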

The combination of these elements paints a picture of a future in which an autonomous system will continuously monitor the global legal landscape, automatically translate legal language into enforceable policies and reconfigure data flows across a company’s infrastructure in real time.

It will also autonomously detect and neutralise potential breaches before they can escalate.

This technological trajectory leads to the inevitable ‘commoditisation of compliance’, where core tasks will become a universally available service. However, this does not mean the end of the privacy profession. On the contrary, the privacy professional’s role will be transformed, from operational ‘firefighting’ to strategic oversight and ethics management of autonomous systems.

In this new reality, the key competences will no longer be limited to interpreting the law. They will also include auditing algorithms and defining operational boundaries for AI agents.

Privacy 2.0 is not an end in itself. It is the operating system for the future of the digital economy.