The development of artificial intelligence is not just about computational advances; it is also about energy. Training large language and generative models requires thousands of GPU/TPU accelerators that draw tens of megawatts of power. As a result, electricity consumption in data centres is rising: in Ireland, data centres consumed as much as 22% of the country’s electricity in 2024. A share that large is a challenge for energy suppliers and data centre operators, who must accommodate growing demand at ever-higher prices while cutting CO₂ emissions.

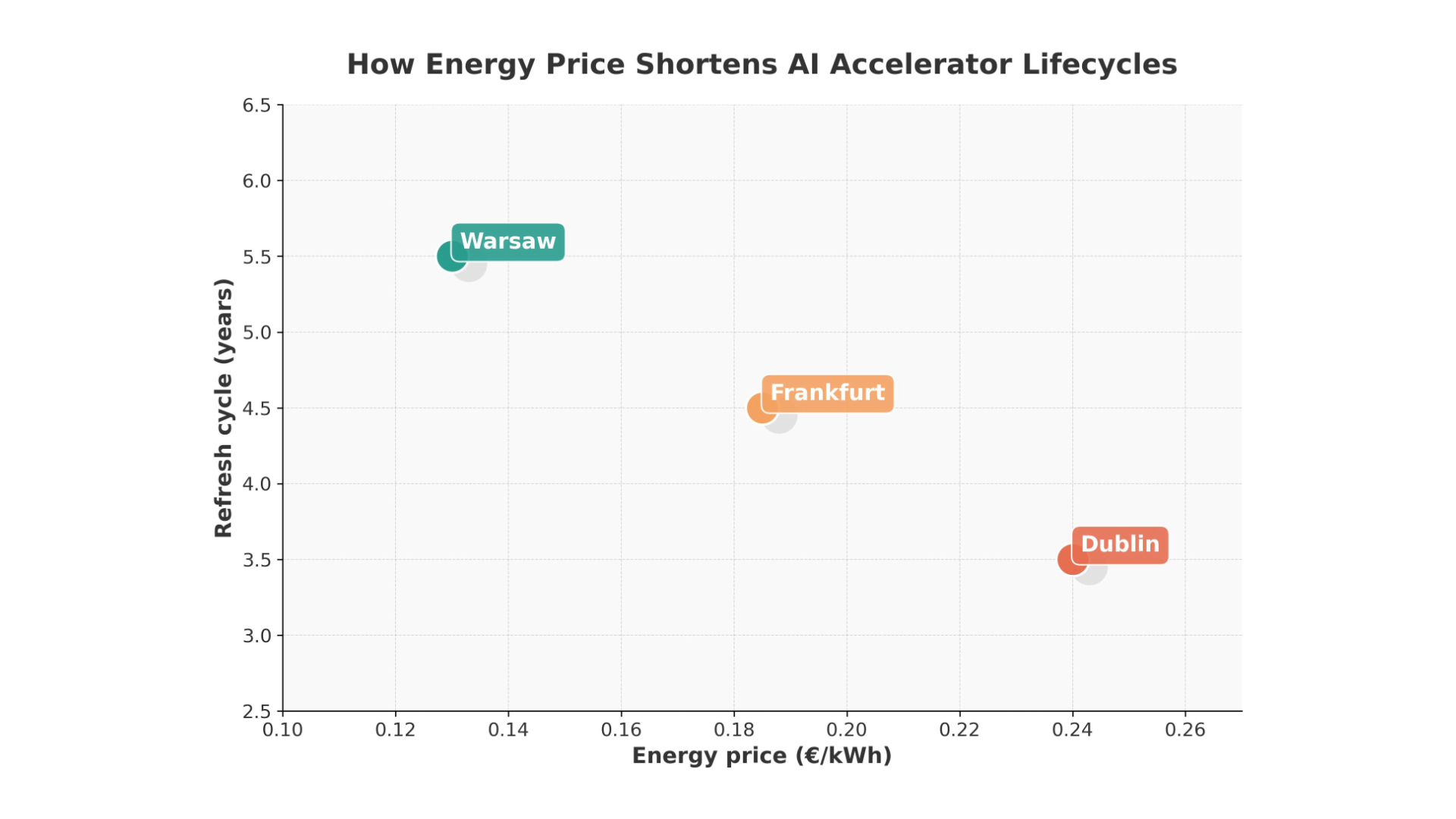

This article compares energy prices in three key European data hubs (Frankfurt, Dublin and Warsaw) with the energy efficiency of successive generations of AI accelerators. On this basis, we analyse how operating costs and technological advances shorten or lengthen the lifecycle of AI hardware.

Energy prices in different hubs

Frankfurt: high prices and environmental requirements

Frankfurt is the second-largest data centre market in Europe. Germany has some of the highest industrial electricity prices: in 2024, companies paid an average of 16.77 ct/kWh, rising to 17.99 ct/kWh in January 2025. For companies with concessions (fixed consumption), the cost was 10.47 ct/kWh. Taxes and levies account for 29% of the price and network charges for 27%.
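The percentage shares above translate into absolute amounts with simple arithmetic. The sketch below assumes the 29% and 27% shares apply to the 16.77 ct/kWh average; the remaining share is left unattributed because the text does not break it down. The names `breakdown` and `SHARES` are illustrative.

```python
# Split the 2024 German average industrial price into the components quoted
# in the text. Assumption: both shares refer to the 16.77 ct/kWh average.
AVERAGE_PRICE_CT = 16.77
SHARES = {"taxes and levies": 0.29, "network charges": 0.27}

def breakdown(total_ct: float, shares: dict[str, float]) -> dict[str, float]:
    """Return each named component in ct/kWh; the rest is left unattributed."""
    parts = {name: total_ct * share for name, share in shares.items()}
    parts["remainder (not broken down)"] = total_ct - sum(parts.values())
    return parts

for name, ct in breakdown(AVERAGE_PRICE_CT, SHARES).items():
    print(f"{name:30s} {ct:5.2f} ct/kWh")
```

With these inputs, taxes and levies come to about 4.86 ct/kWh and network charges to about 4.53 ct/kWh, leaving roughly 7.38 ct/kWh for everything else.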

A strong policy focus on renewable energy sources (RES) and heat recovery obliges data centre operators to invest in sustainable solutions. High energy costs also encourage rapid deployment of more efficient systems that cut the energy consumed per teraflop of compute.

Dublin: the most expensive electricity in the EU and supply constraints

In Ireland, electricity prices for industrial consumers are among the highest in Europe, at around €0.26 per kWh in the first half of 2024. According to the SEAI, the weighted average business price in 2024 was 22.8 cents per kWh, while large consumers paid 16.3 c/kWh. High rates are compounded by limited grid capacity: data centres, concentrated mainly around Dublin, consume 22% of the country’s electricity, and EirGrid predicts this share will rise to 30% by 2030. As a result, new connections are approved only in exceptional cases, so operators must maximise the efficiency of their existing infrastructure.

Warsaw: lower prices but a growing market

Poland stands out with lower prices: around €0.13 per kWh in 2024. According to GlobalPetrolPrices, businesses paid an average of PLN 1.023/kWh (US$0.28) in March 2025, which, on that source’s figures, is still lower than in Germany or Ireland. Lower energy costs allow a longer amortisation cycle, but increasing competition and demand for cloud services are encouraging investment in new hardware to raise computing density.
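To make the price gap between the three hubs concrete, one can estimate the annual electricity bill of the same constant load in each. This is a minimal sketch under stated assumptions: a hypothetical 1 MW load running all year, and one representative price per hub taken from the figures above (Frankfurt January 2025, Dublin SEAI 2024 business average, Warsaw 2024 average). Because these come from different sources and periods, the result is indicative only.

```python
HOURS_PER_YEAR = 8760  # non-leap year

# Representative prices from the text, in EUR/kWh (sources and years differ).
PRICES_EUR_PER_KWH = {
    "Frankfurt": 0.1799,  # German industrial average, January 2025
    "Dublin": 0.228,      # SEAI weighted business average, 2024
    "Warsaw": 0.13,       # approximate 2024 average
}

def annual_cost_eur(load_kw: float, price_eur_per_kwh: float,
                    hours: float = HOURS_PER_YEAR) -> float:
    """Energy cost of a constant load running for `hours` per year."""
    return load_kw * hours * price_eur_per_kwh

LOAD_KW = 1_000  # hypothetical constant 1 MW load
for hub, price in PRICES_EUR_PER_KWH.items():
    print(f"{hub:10s} EUR {annual_cost_eur(LOAD_KW, price):>12,.0f} per year")
```

Under these assumptions, a constant 1 MW load costs roughly €1.58 million a year in Frankfurt, €2.00 million in Dublin and €1.14 million in Warsaw. The difference of about €860,000 a year between Dublin and Warsaw is what gives Polish operators room to stretch amortisation.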

Generations of accelerators: performance per watt

GPU – from Volta to Blackwell

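Nvidia’s V100 (Volta) introduced Tensor Cores in 2017, but with a 300 W TDP and about 125 TFLOPS of peak tensor throughput (roughly 0.4 TFLOPS/W), it is no longer competitive on efficiency. The A100 (Ampere), launched in 2020 with a 400 W TDP, roughly doubled performance per watt: 312 TFLOPS of dense FP16 tensor throughput works out to about 0.8 TFLOPS/W. The next breakthrough was the H100 (Hopper) in 2022. At 700 W it delivers about 989 TFLOPS of dense FP16 (about 1.4 TFLOPS/W, or 1.8 times the A100), and its new FP8 format doubles that figure again, giving workloads that tolerate lower precision more than three times the A100’s FP16 throughput per watt.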
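Performance per watt is simply peak throughput divided by power, but the result depends heavily on numeric precision and on whether sparsity is counted, which is why published TFLOPS/W figures vary so widely. The sketch below assumes the dense FP16 Tensor Core peaks and maximum board power of the SXM variants as listed in NVIDIA’s datasheets (125 TFLOPS/300 W, 312 TFLOPS/400 W, 989 TFLOPS/700 W); these should be checked against current datasheets, and real workloads reach only a fraction of peak.

```python
# (name, peak dense FP16 Tensor Core TFLOPS, max board power in W), SXM variants.
# Assumption: figures as published in NVIDIA datasheets; no sparsity.
ACCELERATORS = [
    ("V100 (Volta, 2017)", 125.0, 300.0),
    ("A100 (Ampere, 2020)", 312.0, 400.0),
    ("H100 (Hopper, 2022)", 989.0, 700.0),
]

def tflops_per_watt(tflops: float, watts: float) -> float:
    """Peak throughput divided by power draw."""
    return tflops / watts

previous = None
for name, tflops, watts in ACCELERATORS:
    eff = tflops_per_watt(tflops, watts)
    # Compare each generation with the one before it.
    gain = f"{eff / previous:.1f}x previous" if previous else "baseline"
    print(f"{name:22s} {eff:.2f} TFLOPS/W ({gain})")
    previous = eff
```

On this basis each generation improves efficiency by roughly 1.8 to 1.9 times, even though the power envelope more than doubles from V100 to H100.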

In 2024, Nvidia announced the H200, which keeps the 700 W TDP but pairs it with HBM3e memory offering 4.8 TB/s of bandwidth. This raised inference performance by 30-45% at the same power draw. In a DGX H200 system, the eight GPUs alone account for 5.6 kW (8 × 700 W), and the system can do up to twice as much work per watt as its predecessor on some inference workloads.
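Because the H200 keeps the same 700 W TDP, a throughput gain at constant power translates directly into lower energy per inference, though not by the same percentage. The sketch assumes the GPUs run at the same average power before and after, which real deployments only approximate; `energy_per_unit_change` is an illustrative name.

```python
def energy_per_unit_change(throughput_gain: float) -> float:
    """Relative change in energy per unit of work when throughput rises by
    `throughput_gain` (0.30 means +30%) at unchanged power draw."""
    return 1.0 / (1.0 + throughput_gain) - 1.0

# Range of inference gains quoted in the text.
for gain in (0.30, 0.45):
    print(f"+{gain:.0%} throughput -> {energy_per_unit_change(gain):+.1%} energy per inference")

# GPU share of system power: eight GPUs at the 700 W TDP.
GPU_TDP_W = 700
GPUS_PER_SYSTEM = 8
print(f"GPU power in an 8-GPU system: {GPU_TDP_W * GPUS_PER_SYSTEM / 1000:.1f} kW")
```

A 30-45% throughput gain therefore cuts energy per inference by roughly 23-31%, not by 30-45%.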

The B200 (Blackwell), with a TDP of 1000 W and three times the computing power of the H100, is expected to debut in 2025. Although power consumption is increasing, the TFLOPS/W ratio continues to improve, pushing the frontier of computing density.
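The claim that TFLOPS/W keeps improving despite a higher TDP can be checked with a single ratio: the performance gain divided by the power increase. The sketch takes the “three times the H100” figure from the text at face value; actual gains depend on precision and workload. The function name is illustrative.

```python
def relative_perf_per_watt(perf_ratio: float, new_power_w: float,
                           old_power_w: float) -> float:
    """Performance per watt of a new chip relative to an old one."""
    return perf_ratio / (new_power_w / old_power_w)

# Inputs from the text: B200 = 3x H100 compute, 1000 W vs 700 W TDP.
ratio = relative_perf_per_watt(3.0, 1000.0, 700.0)
print(f"B200 vs H100: {ratio:.1f}x performance per watt")
```

Even with about 43% more power per chip, the net gain is roughly 2.1 times per watt, which is why rising TDPs do not contradict improving efficiency.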

TPU – an alternative with improved energy efficiency

Google develops Tensor Processing Units (TPUs), dedicated AI accelerators. According to Google, TPU v4 is 1.2-1.7 times faster than the A100 while using 1.3-1.9 times less power, and TPUs are often described as two to three times more power-efficient than GPUs. Upcoming generations, such as v6 ‘Trillium’ and v7 ‘Ironwood’, focus on maximising compute density while reducing power consumption.

Equipment life cycle – flexibility instead of rigid amortisation

In traditional data centres, hardware was replaced every five to seven years. Decarbonisation research, however, indicates that in AI environments replacement cycles of four years or longer are economically viable, although shortening the cycle can reduce emissions. When a new GPU generation offers several times the energy efficiency, retiring ageing chips early can be justified, because the energy savings and lower emissions costs outweigh the investment. Replacement every 4-5 years may become the norm in regions with high energy prices.
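The benefit of early retirement follows from a simple relation: if new hardware has k times the performance per watt, the same work needs 1/k of the energy, so the share saved is 1 − 1/k. The sketch assumes a hypothetical fleet drawing a constant 1 MW for a fixed workload; the function names are illustrative.

```python
HOURS_PER_YEAR = 8760

def energy_saved_fraction(efficiency_gain: float) -> float:
    """Share of energy saved when the same work runs on hardware with
    `efficiency_gain` times the performance per watt."""
    if efficiency_gain <= 0:
        raise ValueError("efficiency_gain must be positive")
    return 1.0 - 1.0 / efficiency_gain

def annual_mwh_saved(old_load_mw: float, efficiency_gain: float) -> float:
    """MWh saved per year for a workload that kept the old fleet at `old_load_mw`."""
    return old_load_mw * HOURS_PER_YEAR * energy_saved_fraction(efficiency_gain)

OLD_LOAD_MW = 1.0  # hypothetical fleet running at a constant 1 MW
for gain in (1.5, 2.0, 3.0):
    print(f"{gain:.1f}x perf/W: {energy_saved_fraction(gain):.0%} saved, "
          f"{annual_mwh_saved(OLD_LOAD_MW, gain):,.0f} MWh/year")
```

Savings show diminishing returns: a twofold gain saves half the energy (4,380 MWh a year here), while a threefold gain saves two-thirds (5,840 MWh). The case for early retirement therefore rests on large generational jumps and on the price of each saved kWh.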

How does the price of electricity affect decisions to upgrade?

Dublin – need for computing density

With prices of 22-26 cents per kWh and limited grid capacity, Irish data centres are forced to maximise efficiency. Because the H100 and H200 offer roughly twice the performance per watt of the A100, an investment in them pays for itself faster. Replacing old A100s with H100s or H200s shortens the amortisation cycle to three to four years, as the energy savings and lower emissions costs outweigh the capital expenditure. Even more energy-efficient chips (B200, TPU v6) may accelerate upgrades further.

Frankfurt – a trade-off between cost and investment

German energy prices (17-20 ct/kWh) are lower than in Ireland but still motivate optimisation. Companies tend to replace equipment every 4-5 years, especially when the gap between generations is large. At the same time, operators of larger installations can benefit from discounts and long-term contracts, which reduces the pressure for immediate replacement. Regulations requiring renewable energy and heat recovery also favour energy-efficient platforms.

Warsaw – a longer breath, but growing ambitions

The lower cost of energy (around 13 ct/kWh) allows Polish operators to extend the life cycle of their equipment. Replacing the V100 with the A100 or H100 still brings savings, but they are smaller than in Ireland. However, growing demand for AI services, the expansion of R&D centres in Poland and competition from international players may shorten replacement cycles to 4-5 years, especially as B200s and energy-efficient TPUs become more widely available.
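One way to see why the same upgrade is more compelling in Dublin than in Warsaw is to compute how much capital expenditure the energy savings alone could repay over a given horizon. This is a minimal sketch assuming a hypothetical 1 MW fleet, a twofold efficiency gain (roughly A100 to H100), a four-year horizon and the per-kWh prices quoted earlier; it ignores discounting, resale value, emissions costs and the extra capacity the new hardware provides. `break_even_capex_eur` is an illustrative name.

```python
HOURS_PER_YEAR = 8760

# Prices quoted in the article (EUR/kWh); sources and years differ.
PRICES_EUR_PER_KWH = {"Dublin": 0.228, "Frankfurt": 0.1799, "Warsaw": 0.13}

def break_even_capex_eur(old_load_kw: float, efficiency_gain: float,
                         price_eur_per_kwh: float, years: float) -> float:
    """Capital expenditure that energy savings alone would repay over `years`.

    Ignores discounting, resale value, emissions costs and the extra
    capacity the new hardware provides."""
    saved_kw = old_load_kw * (1.0 - 1.0 / efficiency_gain)
    return saved_kw * HOURS_PER_YEAR * price_eur_per_kwh * years

# Hypothetical scenario: 1 MW fleet, 2x perf/W, 4-year horizon.
for hub, price in PRICES_EUR_PER_KWH.items():
    capex = break_even_capex_eur(1_000, 2.0, price, 4)
    print(f"{hub:10s} energy savings repay up to EUR {capex:,.0f}")
```

Under these assumptions, energy savings alone would cover about €3.99 million of new hardware in Dublin, €3.15 million in Frankfurt and €2.28 million in Warsaw, so the same upgrade clears the bar sooner where electricity is dearer.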

Future trends: HBM3e memory, Blackwell architecture and TPU Trillium

Accelerator performance is not growing only through more cores. New chips such as the H200 raise memory bandwidth to 4.8 TB/s with HBM3e. The next step is the Blackwell B200, with a 1000 W TDP, higher memory bandwidth and a second-generation Transformer Engine. Google, in turn, is developing the v6 ‘Trillium’ and v7 ‘Ironwood’ TPUs to improve power efficiency and compute density.
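Memory bandwidth matters because token-by-token inference is often memory-bound: each generated token requires streaming the model weights from HBM. A simplified upper bound is therefore bandwidth divided by model size. The sketch assumes a hypothetical 70-billion-parameter model stored in 8-bit weights (70 GB), ignores the KV cache and batching, and uses 3.35 TB/s for the H100 SXM (from NVIDIA’s datasheet) against the 4.8 TB/s quoted above for the H200.

```python
def decode_tokens_per_second(bandwidth_tb_s: float, model_bytes: float) -> float:
    """Upper bound on single-stream decode speed when every generated token
    must read all model weights from HBM once (memory-bound regime)."""
    return bandwidth_tb_s * 1e12 / model_bytes

MODEL_BYTES = 70e9  # hypothetical 70B-parameter model in 8-bit weights
for name, bw in (("H100 (HBM3)", 3.35), ("H200 (HBM3e)", 4.8)):
    print(f"{name:14s} <= {decode_tokens_per_second(bw, MODEL_BYTES):5.1f} tokens/s")
```

The two bounds differ by a factor of 4.8 / 3.35 ≈ 1.43, which is consistent with inference gains of 30-45% at the same 700 W for workloads limited by memory bandwidth rather than compute.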

Efficiency per watt is becoming the most important parameter as economic and regulatory pressures force operators to reduce emissions. High energy prices in Europe further reinforce this trend.

Differences in energy prices across Europe determine AI infrastructure modernisation strategies. Ireland and Germany, with the highest rates, are shortening equipment lifecycles to reduce operating costs. Poland, benefiting from lower prices, can afford to use existing systems for longer, although growing demand and competition will also accelerate change there.

Technological advances – from the V100 GPU, to the A100 and H100, to the H200 and the upcoming B200 – mean that the TFLOPS/W ratio improves substantially with every generation. Alternative TPU accelerators show even greater energy efficiency, which could challenge GPU dominance in the future. Hardware replacement decisions therefore cannot be rigid: they must account not only for the cost of new hardware, but also for energy prices, CO₂ emissions and customer requirements. Megawatts and teraflops will become increasingly intertwined in data centre operators’ strategies over the coming decade.